TLDR: Don't forget that the CPU eats a larger portion of the Steam Deck's power budget than it does on the Switch, so in turn the Switch's GPU gets a higher percentage of its power budget than the Steam Deck's GPU does.

Rest of this post is just expanding on the above point:

For mobile Zen 2, I'll be using two charts from here.

Look at the red line of dashes for mobile Zen 2.

Key assumption 1: I'm assuming 4 cores to match up with the rest (and also for the charts to make sense, especially its performance relative to 4 desktop Zen 2 cores).

So far as the author can manage, it looks like we see the power draw range from as low as ~3.2 watts to ~26 watts, or about ~0.8-~6.5 watts per core.

This one tells us the range in clock frequency that the author managed to find. About ~1.7 ghz to 4 ghz. So let's map the end points; ~3.2 watts to ~1.7 ghz and ~26 watts to 4 ghz. But what about in between?

(disclosure: observant readers looking at the page I linked to will notice that the 7-zip compress chart has a slight difference in the range of power drawn. Clocks still look to have the same range. That's an example of different workloads stressing different parts of the CPU, leading to differences in total power used despite the same frequency. Keep in mind that it's probably the case that for gaming, your power draw at a given frequency would be slightly lower compared to video transcoding, albeit not significantly enough to change the message)

Going back to the first chart, the perf/power curve looks like a reasonable shape. I also know that transcoding with x264 is generally understood to have performance (ie FPS) scale linearly with threads.

Key assumption 2: Ergo, I assume that the relationship between clock and perf is linear.

Starting with the end points... it's ~3.2 FPS at its slowest and about... ~6.3 FPS at its fastest. So let's map ~1.7 ghz to ~3.2 FPS and 4 ghz to ~6.3 FPS. Then, we can see that ~4/1.7 times the clock gets ~6.3/3.2 times the perf. Then if it's a straight line, the scaling factor would be... x ~= (6.3 / 3.2) * (1.7 / 4) ~= 0.837.
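For anyone who wants to check the scaling factor, here's a minimal Python sketch of that step. The numbers are the eyeballed chart values from this post and the variable names are made up for illustration. Note that applying this factor works out to the same thing as scaling proportionally down from the 4 ghz end point (perf ~= 6.3 * f / 4).

```python
# End points read off the charts by eye (approximate values from this post).
F_LOW_GHZ, F_HIGH_GHZ = 1.7, 4.0
FPS_LOW, FPS_HIGH = 3.2, 6.3

clock_ratio = F_HIGH_GHZ / F_LOW_GHZ   # ~2.353x the clock
perf_ratio = FPS_HIGH / FPS_LOW        # ~1.969x the perf

# Scaling factor: how much of the clock increase shows up as perf.
scaling = perf_ratio / clock_ratio
print(f"scaling factor ~= {scaling:.3f}")
```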

Checking back with wikipedia, Steam Deck's listed with CPU frequency being 2.4 to 3.5 ghz...

2.4/1.7 * 0.837 ~= 1.182. Then I multiply that by 3.2 FPS to get ~3.781 FPS. On the chart, that's about... 4.6-4.7 watts (or 1.15-1.175 watts per core).

(btw, if it were 8 cores and thus not even 0.6 watts per core to hit 2.4 ghz, that would outdo ARM on an N7 node, and that certainly fails a sanity check)

3.5/1.7 * 0.837 ~= 1.723. Then multiply by 3.2 FPS to get ~5.514 FPS. On the chart, that's about... 10.7-10.8 watts (or 2.675 to 2.7 watts per core).
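Here's a rough Python sketch that reruns the two Steam Deck estimates in one go, including the 8-core sanity check. It's only a sketch under this post's assumptions: the FPS and wattage values are eyeballed from the charts, the watts at each FPS are typed in by hand rather than computed, and the function and variable names are hypothetical.

```python
# End points read off the charts by eye (approximate values from this post).
F_LOW_GHZ, F_HIGH_GHZ = 1.7, 4.0
FPS_LOW, FPS_HIGH = 3.2, 6.3
SCALING = (FPS_HIGH / FPS_LOW) / (F_HIGH_GHZ / F_LOW_GHZ)  # ~0.837


def estimated_fps(clock_ghz):
    """Estimated x264 FPS at a given clock, following this post's arithmetic."""
    return clock_ghz / F_LOW_GHZ * SCALING * FPS_LOW


# Total watts read off the perf/power chart at each estimated FPS (eyeballed ranges).
CHART_WATTS = {2.4: (4.6, 4.7), 3.5: (10.7, 10.8)}

for clock, (w_lo, w_hi) in CHART_WATTS.items():
    fps = estimated_fps(clock)
    print(
        f"{clock} ghz: ~{fps:.2f} FPS, "
        f"{w_lo / 4:.3f}-{w_hi / 4:.3f} W/core with 4 cores, "
        f"{w_lo / 8:.3f}-{w_hi / 8:.3f} W/core if it were 8"
    )
```

The 8-core column is only there to show why key assumption 1 matters: at 2.4 ghz it lands under 0.6 watts per core.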

What about the Switch?

That's a lot easier!

Remember that Erista is on TSMC's 20nm node. What would make for a reasonable comparison? Hmm...how about something on Samsung's 20nm node? It's not gonna be exactly the same, but it'll be close enough for the purposes of this post.

From

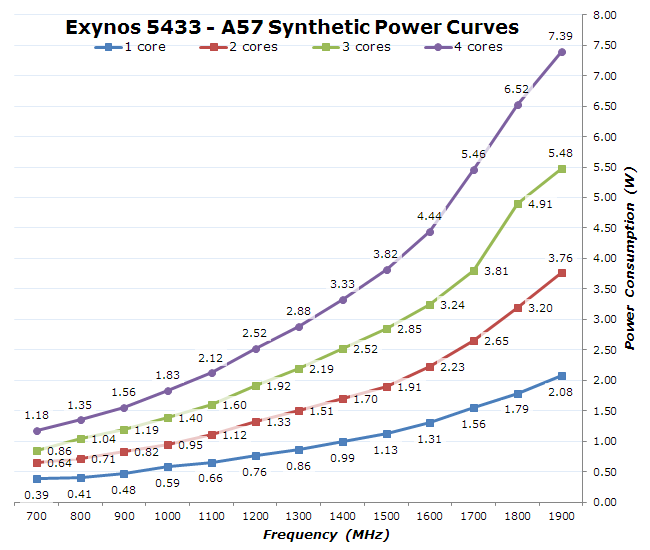

this page, we have this chart that longtime readers of this thread may remember:

Quad A57 cores @1 ghz use about 1.83 watts.

Personally, I expect Drake to target about 2 watts or less for the CPU. And that's fine. ARM Cortex-A cores shoot for 1 watt or less per core. The A78 specifically targets 3 ghz@1 watt on a 5 nm process (or so ARM promotional material says). Sure, 8 A78@3 ghz would then take up ~8 watts themselves. But we know that's not happening. Napkin math says slash the frequency in half to get a quarter of the power as a rough guesstimate, so you may be able to hit ~1.5 ghz at 2 watts on base N5. And that's ignoring that the core reserved for the OS doesn't necessarily have to be at the same frequency as the rest, which could free up some centiwatts to spread among the rest.

On an N7 node, ARM says A77 hits 2.6 ghz@1 watt. A78's slightly more efficient (-4% power) even on the same process, so let's stick with that number. Again, napkin math says half frequency/quarter power, so 8 A78 at 1.3 ghz should be doable within 2 watts.
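The napkin math for both nodes can be written out as a short Python sketch. It assumes nothing beyond the rule of thumb used above (half the frequency, a quarter of the power, so power goes with the square of the frequency ratio) and the reference points quoted in this post. The function name is hypothetical, and real silicon won't follow this exactly.

```python
def napkin_watts_per_core(ref_ghz, ref_watts, target_ghz):
    """Rule of thumb from this post: half the frequency, a quarter of the power,
    i.e. power scales with the square of the frequency ratio."""
    return ref_watts * (target_ghz / ref_ghz) ** 2


CORES = 8

# Reference points as quoted in this post (1 watt per core at the listed clock).
n5_per_core = napkin_watts_per_core(ref_ghz=3.0, ref_watts=1.0, target_ghz=1.5)
n7_per_core = napkin_watts_per_core(ref_ghz=2.6, ref_watts=1.0, target_ghz=1.3)

print(f"N5: {CORES} cores @ 1.5 ghz ~= {CORES * n5_per_core:.2f} W")
print(f"N7: {CORES} cores @ 1.3 ghz ~= {CORES * n7_per_core:.2f} W")
```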

And in total, the watts you save here on the CPU can be shifted over to the GPU. A few watts here gets you a few more deciwatts per SM.