NintenDuvo

Bob-omb

- Pronouns

- He him

I really hope we get some new information coming out of GDC. While the leak was fun, it still feels like there are so many other missing components.

We need a new Bloomberg article to hit. Starting last March it felt like there was a new article on the next Switch every 2-3 weeks.

Bloomberg probably wants to double/triple/quadruple check before they release another article.

If it's coming this fall, Bloomberg's sources will start hearing about things going into motion on the manufacturing side.

If we get to May though and haven't heard anything then I doubt it's coming this year.

Can’t wait for the amount of snark they’ll throw in this one.

“At least 20 developers now working on titles for a 4K Switch that does not exist. Many aim to release these new titles on the non-existent platform by late 2022.”

This will be followed by an official response the next day by Nintendo vaguely denying the claims. Then an hour later the US patent office will publish a patent submitted by Nintendo for an in-house version of ray tracing.

If a new model is coming this year then we should hear some rumblings of it at GDC, right? If nothing comes out of that, it’s likely nothing will be launching in 2022?

Ehh, I'd say Nintendo's next financial meeting covering FY23 would be more of a make or break for Drake in 2022/2023.

Thanks Thraktor. At least Nintendo seems to have given this issue some thought in their design. So I guess they are doing as much as we can expect from them.

And I am sorry for @-ing you @brainchild, but based on the calculations from Thraktor, could an SoC that has a memory bandwidth of 88 GB/s run Nanite? We all know now that one would need speeds above 500 MB/s to stream data from the hard drive, but can the bandwidth be a bottleneck?

I'll preface that question as usual by saying that I am a total noob in this domain. I am grateful for each answer I receive.

Well, 88 GB/s would be the RAM bandwidth.

I've been out of the loop, but are people expecting a new model to launch this year? I was assuming 2023 for some reason.

The only rumors we have heard have said late this year, with a chance to slip into early next year.

120Hz would still be a crazy high power draw (and at 1080p? Very high), not to mention the CPU and GPU costs.

The only reason I mentioned 1080p displays is that 1080p seems to be the lowest resolution required to have VRR support on displays already available (e.g. iPhone 13 Pro, iPhone 13 Pro Max).

Yeah, the battery life differences are ridiculous on Steam Deck when going from 30fps to 60fps. It basically halves your battery life at 60fps. 120fps would be nuts.

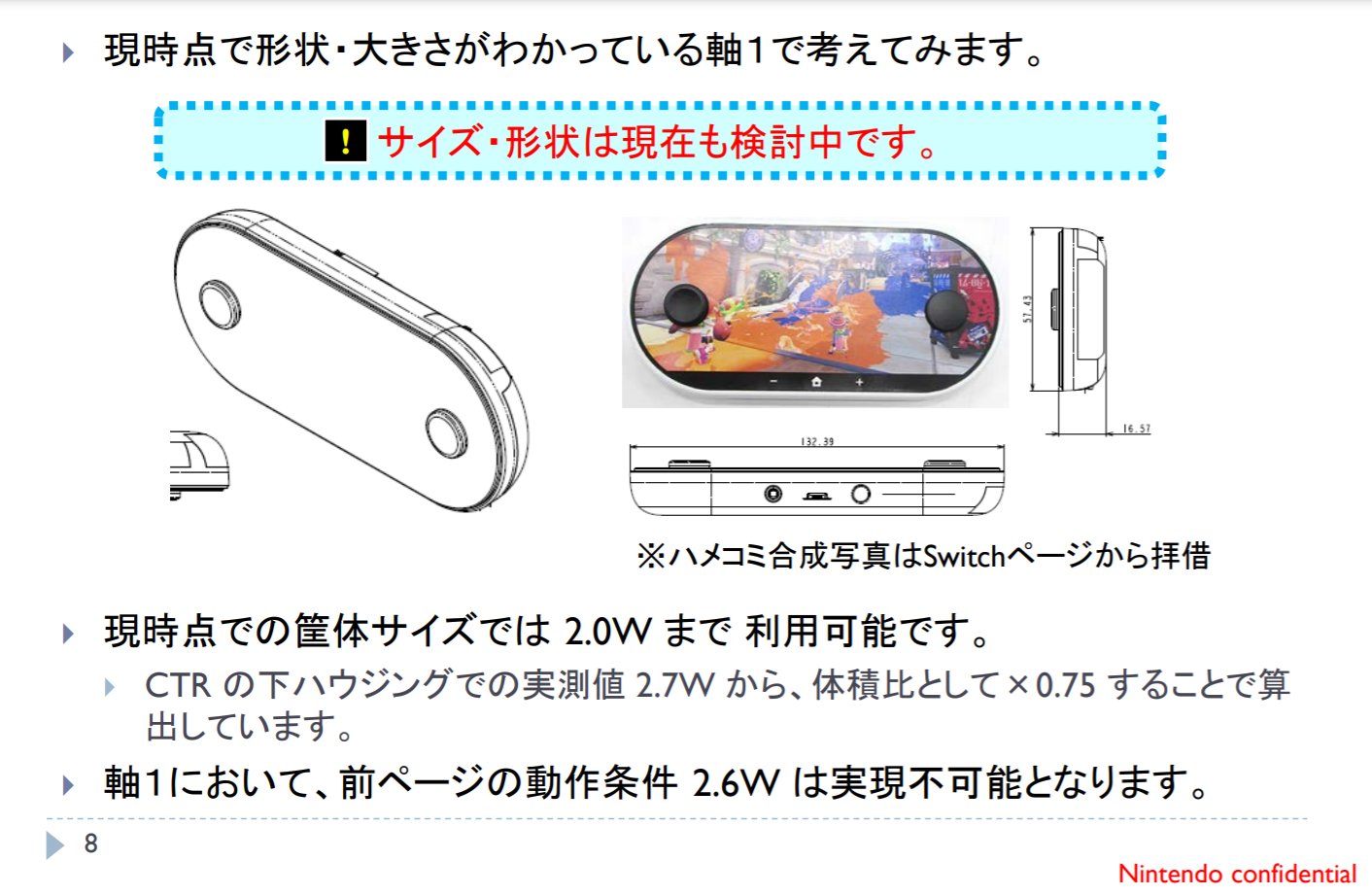

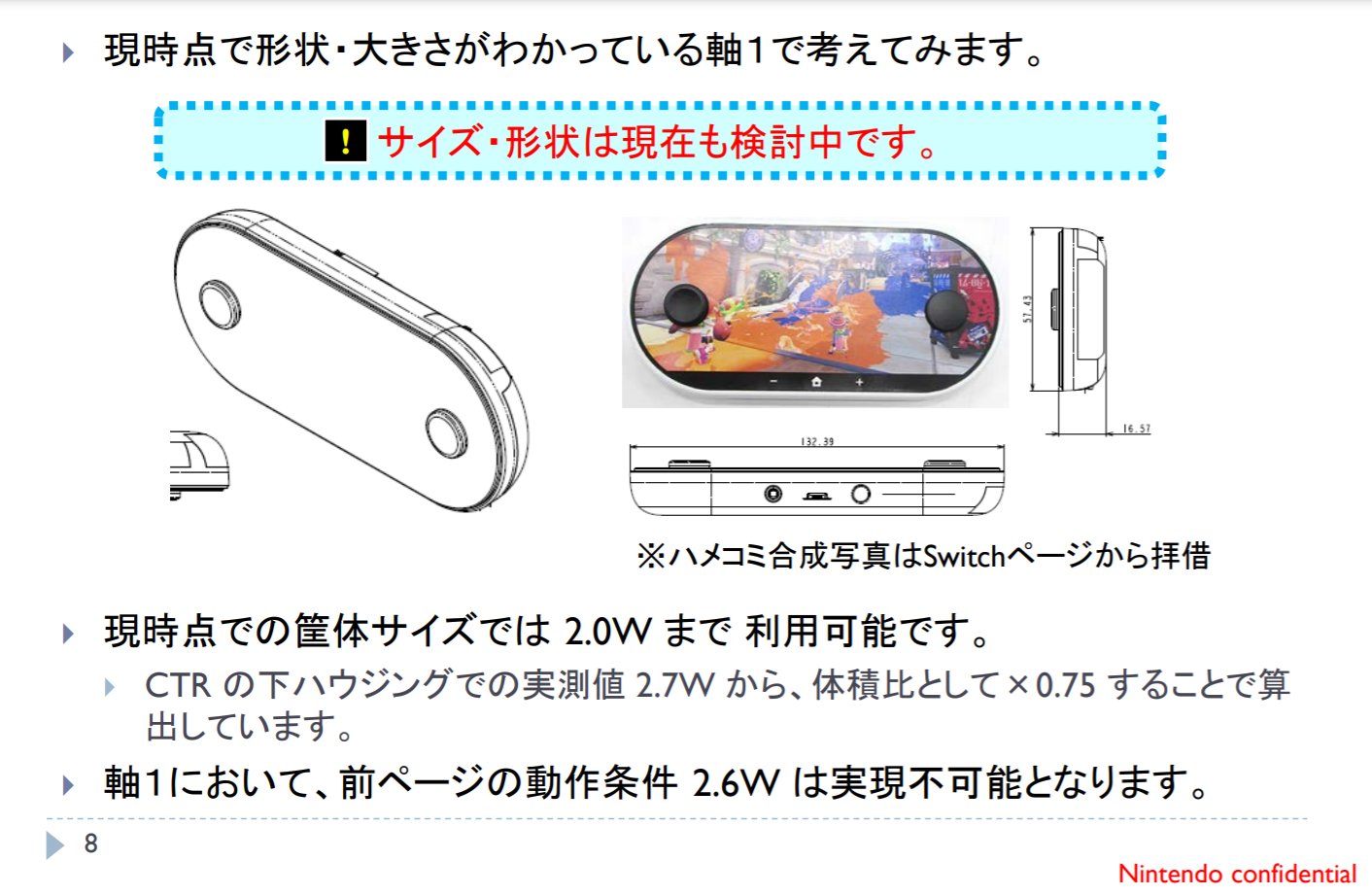

So a YouTuber named RGD uploaded an informative and fascinating video about the hardware that was originally the successor to the Nintendo 3DS, but ultimately became the Nintendo Switch, called Project Indy.

One fact that intrigued me is that Nintendo actually considered using a 120 Hz display for Project Indy. That fact makes me believe that Nintendo going for a 1080p display with a refresh rate of 120 Hz is not as unlikely as originally thought, especially if Nintendo wants to enable VRR support for TV mode and handheld mode. (Granted I don't think the possibility is very high.)

I wonder... would people have preferred this Switch to the current one we ended up getting? What would the alternate timeline have looked like?

(The real question being "How many people here are actually against such 'gimmicks'?" I would presume the folks in Famiboard would be a bit more...open-minded.)

We own a Switch, a console with gimmicks attached to it and integral to its design, and we are here speculating on a Switch but better, some even thinking of different ideas for the controllers, others about the silicon of the Switch, others about improving the overall experience, and you think most of us aren’t at the very least open-minded to other gimmicks? Lol

You'd be surprised about how many people I've seen post here who would hate for Nintendo to return to its "gimmick roots", as it were. Some people just want feature parity with the other two companies, all the way down to copying achievements/trophies, and even to naming the successor "Nintendo Switch Pro" or "Nintendo Switch 2".

Personally, I’d like to see more VR/AR integration.

Due to power draw reasons, I’d suspect that if Drake has support for 120Hz, it would be mainly a docked feature and for very select titles.

I think Nintendo (should they go for a high-refresh panel) would probably be interested in a 40Hz mode for running their games with DLSS.

But the double-wide Orin tensor cores would be the more efficient option in performance per Watt if Nintendo's looking purely for DLSS performance. Let's say that you had a 6 SM part based on desktop Ampere and wanted to double the tensor core performance. If you switched to Orin's double-wide tensor cores, then you're approximately doubling the power consumption from tensor cores*, and that's about it. However, if you kept the standard tensor cores, but doubled up on the number of SMs, you're doubling the power consumption for everything. Tensor core power consumption doubles, because you've got two of them, but you're also adding extra standard CUDA cores, extra RT cores, extra texture units, extra control logic, and all the additional wiring, logic and associated power consumption from moving data and instructions to and between these units.

* I'd actually assume the power consumption of Orin's tensor cores is less than double the power consumption of standard Ampere tensor cores, as while you're doubling the ALU width, there'll be a certain proportion of instruction decode and control logic which won't be doubled.
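To put rough numbers on the comparison above, here is a toy model. The per-SM power split (1 unit for tensor cores, 4 units for everything else) is a made-up placeholder, not a measurement of Ampere or Orin, and `sm_power` is just an illustrative helper name.

```python
# Toy model of the trade-off described above. All numbers are hypothetical
# placeholders (relative power units per SM), not measurements of a real GPU.

def sm_power(tensor: float, other: float, sm_count: int) -> float:
    """Total power as SM count times per-SM power (tensor + everything else)."""
    return sm_count * (tensor + other)

# Assumed split for one SM: tensor cores draw 1 unit; CUDA cores, RT cores,
# texture units and control logic together draw 4 units.
TENSOR, OTHER = 1.0, 4.0

baseline = sm_power(TENSOR, OTHER, sm_count=6)

# Option A: keep 6 SMs, switch to double-wide tensor cores.
# Worst case from the post: tensor power doubles, nothing else changes.
double_wide = sm_power(2 * TENSOR, OTHER, sm_count=6)

# Option B: keep standard tensor cores, double the SM count to 12.
double_sms = sm_power(TENSOR, OTHER, sm_count=12)

print(f"baseline:        {baseline:.0f}")
print(f"double-wide:     {double_wide:.0f} ({double_wide / baseline:.2f}x)")
print(f"double SM count: {double_sms:.0f} ({double_sms / baseline:.2f}x)")
```

With this assumed split, both options double tensor throughput, but the double-wide route raises total power far less than doubling the SM count. The exact ratio depends entirely on the placeholder split.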

Who is saying they are throwing away money?

All I’m saying is that any company spending 50% of their yearly revenue on new hardware R&D is taking a far, FAR greater risk on investment than a company spending 5% of their yearly revenue on R&D. It doesn’t matter if the latter R&D amount is more than the former; the above is still true. This shouldn’t be a controversial statement.

One would also say that if a company at one time was spending 50% of their revenue on R&D, and is now spending 5%, we would call that a company being far more conservative in investment spending, actually.

So, Nintendo spending on R&D for Drake is arguably Nintendo being more conservative lol. They will actually waste less of their profits on Drake R&D than they did for Mariko.

See, this thinking inherently suggests that because it's a small percentage of revenue, they can afford to spend it regardless of return because it's a small percentage, but I can tell you with absolute certainty that Nintendo doesn't think this way, because they have first-hand experience with revenue being a moving target, something that can shrink drastically in a matter of a year, as you yourself acknowledge. Hell, most corporations don't operate this way. Project expenses aren't weighed against current revenues, they're weighed against the revenues and profits that project is projected to generate. Every dollar spent is a risk taken to make money, so for every dollar spent, they want that dollar back and more; anything else would be considered profit loss. A 10 billion yen R&D spending increase is still a 10 billion yen spending increase, regardless of how that's represented as a percentage of revenue. It's not more or less conservative based on what they raked in for the year. The only thing in question is whether those costs are properly returned with an appropriate increase to profits. And spending on what qualifies as next generation hybrid hardware development for iterative successor sales figures? That ain't wise spending.

I think if that weren’t the case, and Nvidia was OK still making Volta architecture chips through 2027 and that was cost effective… we would have seen that. I’m guessing it isn’t. Heck, people here have even intimated the idea that maybe fewer SMs and 8nm might not have been enough to function well enough overall.

We can agree to disagree, but I’m positive the hardware they ended up with was more out of necessity to get everything DLSS related working adequately with the caveats Nintendo creates for themselves with Switch hardware.

Nvidia has been more than OK to still be making Maxwell architecture chips through to 2023 (or 2027 as you suggest, if we're to believe that this is an iterative revision released alongside current Switch models to elongate its hardware cycle). That'd be 8 (or 12, again as you suggest) years from when the Tegra X1 was first commercially used.

Look, the RTX 2060 is the lowest CUDA offering by Nvidia to attempt DLSS. That’s 1920 CUDA cores running at 1.37 GHz, and it draws 175W.

That can play a 2019 game at 4K/60fps… but only in performance mode. It starts to suffer beyond that.

And you want me to think Drake's 1536 CUDA cores at 1GHz with a 25W power draw is somehow bordering on grotesque overkill to play games at 4K/60fps? Why?

Go look at the 3050 Ti DLSS capable laptop GPU. That’s supremely grotesque compared to Drake lol

…

What’s the minimum number of CUDA cores running at 1 GHz with 25W needed to get… say… Death Stranding running at 4K/DLSS in performance mode? I genuinely don’t know.

As I mentioned above, the RTX 2060 barely does this.

We’re talking about hardware to render Switch games in 4K and you bring up… a PS4/PS5/PC exclusive? Huh? What a red herring you decided to throw into the conversation. How about we look into what’s required to achieve upscaling with Switch games themselves, hmm?
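For context on what "4K in performance mode" means for the GPU, here is a small sketch of the internal render resolution per DLSS mode. The per-axis scale factors are the commonly cited DLSS 2 presets and are treated here as an assumption; `internal_resolution` is an illustrative helper, not an Nvidia API.

```python
# Commonly cited DLSS 2 per-axis render scales (assumed, not from the thread).
DLSS_SCALE = {
    "quality": 2 / 3,
    "performance": 1 / 2,
    "ultra_performance": 1 / 3,
}

def internal_resolution(out_w: int, out_h: int, mode: str) -> tuple[int, int]:
    """Resolution the GPU actually renders before DLSS upscales to the output."""
    scale = DLSS_SCALE[mode]
    return round(out_w * scale), round(out_h * scale)

for mode in DLSS_SCALE:
    w, h = internal_resolution(3840, 2160, mode)
    share = (w * h) / (3840 * 2160)
    print(f"{mode:>17}: {w}x{h} ({share:.0%} of the output pixels)")
```

Under these assumed scales, a 4K output in performance mode is rendered at 1080p internally, so the shading work is closer to a 1080p game plus the fixed cost of the upscale pass.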

The proof that Nintendo/Nvidia couldn’t make a cheaper, more efficient SoC to get 4K/60fps DLSS gaming is in the fact that it never materialized. If there were such a possibility, it would have happened.

Unless... y'know, what you think they're designing (an iterative revision) isn't actually what they're designing and that's why it didn't materialize. So the absence of evidence is not really "proof", is it?

shrugs you say demand, I say incentivize.

I’m pretty sure Nvidia greatly informed Nintendo of their best options to get Switch mobile DLSS gaming workable and sustainable for 2022-2027 or so.

Nintendo went for the overall best option. They can afford to spend a bit more on a model to future-proof it better, given their current success and the knowledge that investing in this same ecosystem is important to maintain that.

I’m sure Nvidia convinced Nintendo on the value of DLSS.

They weren’t bamboozled by Nvidia on anything lol

I'm sure Nintendo saw the value in DLSS as well, but Ampere isn't required to achieve it. And since you think this hardware is only to last 4 years and will not be sustaining its own software library as an iterative revision to be replaced by a successor in 2027, there's no "future-proofing" required. Saying it needs to be future-proofed is actually working against your own argument that this is merely a 4-year stopgap.

Now I'm still not entirely sold that it will release next FY.

But they don't really need to highlight it in any fashion when covering their upcoming FY, just like they didn't do with the Lite, the OLED or even the original Switch in the FY it launched.

I think Alovon means we might get a sense of whether they're releasing new hardware at that meeting based on the forecast, or better yet if someone asks Furukawa and he says "we cannot announce anything at this time" or similar.

Nobody said they have to highlight it. We'll see how Furukawa responds to investors. His phrasing will make it clear if it’s coming. I don't care what anyone else says other than him. It’s his product, he knows when it’s coming, and he’ll answer for it in a roundabout way.

Personally, I do want them to explore the Miracast/wireless streaming ideas more, though latency will be an ongoing issue, which might make the "docked" solution still viable (that and having a higher power profile).

Same.

Uh, why is that the thumbnail?

I guess because of this.

semianalysis.com

⋮

In addition, Apple will need to increase memory bandwidth for their SOC. They have been able to stay on LPDDR4x for 5 generations by increasing on die cache sizes and improving utilization. With the A16, the step up to LPDDR5 brings a large cost increase. Due to the way the 3 major DRAM companies have slowly increased output, cost per bit of DRAM has not really fallen. Memory prices are a major drag on computing.

The iPhone 13 ships with 4GB of LPDDR4x, moving to 6GB of LPDDR5 and to the larger A16 chipset would be cost prohibitive. This would result in more than a $40 increase in bill of materials (BOM). With numerous supply chain disruptions and cost increases for energy and commodities, it would be a difficult pill for Apple to also swallow the ballooning silicon costs as well. Apple could also increase prices, but that likely would eat into sales volumes.

⋮

Maybe Nintendo fans would have liked it, but I doubt it would have been the success the Switch is.

Because he inferred from the leaked docs and designs that this Indy console would have the design of that infamous patent, which was used for the fake NX rumor.

I would have loved this design. It seems more ergonomic than the current Switch. Maybe a redesigned Switch Lite down the line.

I'm simply speculating here, but maybe Miracast or a similar feature could be used for a Nintendo Switch Lite model equipped with Drake as the SoC, but with the max resolution limited to the max resolution of the display, for battery life and latency reasons.

mmm, maybe:

us20220040571 Patents

www.patentguru.com

Not what you have in mind, but they seem to want to do something here.

As I always say, if a patent application from Nintendo is published prior to the invention/concept being revealed or announced officially, it's very unlikely that it will ever be used.

Personally I'm not too perturbed by the prospect of a premium Drake Switch being priced at $450+ (and perhaps a dock/accessory-less SKU for $400). It's actually a good product strategy to target more market segments via price/product differentiations.

What baffles me, however, is that the current Lite and hybrid models may not have enough legs to last for more than a couple more years, and sooner rather than later they will need to be upgraded to the new platform standard (Drake SoC, wider memory, faster storage, etc.) while ideally maintaining their respective price points at $200 and $300-$350. Looking at the rather high-end specs being speculated in this thread, I'm not sure how that could be accomplished.

Even if we humor this idea that Nintendo somehow manages to release a Drake-capable Lite and hybrid for $200-$350, it'd destroy the value of the $450 premium Switch and alienate their most loyal early adopters (owners of the $450 Drake).

A potential solution to this conundrum is to double down on the price/product differentiations by introducing two Switch tiers a la Xbox Series X and S. But unlike MS who released both tiers simultaneously, Nintendo would introduce the premium tier (let's call it Switch DX for now) first to capture the enthusiast/early adopter segment, and the standard tier 2-3 years later for the mainstream/casual markets.

| Switch DX | Switch S | Switch Lite S |
| --- | --- | --- |
| 2022-2023 | 2024-2025 | 2025-2026 |
| Drake (12 SM, 8 A78), 128-256GB | Dane (6 SM, 4-6 A78), 64GB | Binned Dane, 32GB |
| Hybrid + enthusiast features (e.g., live streaming) | Hybrid | Handheld only |

IMO the staggered release of tiered products is a superior strategy because it not only allows Nintendo to extract more value from the higher-end segment sooner, but also drives down production costs and builds a larger software catalog in advance of the lower tier launch.

While this speculated lineup may seem unlikely, it isn't impossible either for the following reasons:

- The mysteriously disappeared Dane may make a triumphant return

- Orin ADAS (5w-10w) and Jetson Nano Next are being released in 2023; could be related to Dane

- The software compatible layer developed for Drake to run TX1 titles might simplify the support for yet another SoC (Dane)

- Cross-gen games that run on both TX1 and Drake should be able to support Dane easily

- Drake exclusive games already give up on the 100 million TX1 installed base; it's no great loss if they aren't patched to support Dane

If this model works out, Nintendo may sustain it by introducing the Switch 2DX in, say, 2027-2028 with a new SoC and new gimmicks; 2-3 years later, the Switch 2S and Lite 2S may follow with a die-shrunk/refreshed Drake. Rinse and repeat for a tick-tock iterative succession plan.

Edit: typo

So that says to me it's a cost consideration to go with the standard Ampere tensor cores.

In hardware design, performance, power efficiency and cost are the holy trinity of considerations, so if these double-wide tensor cores are more performant and more power efficient, the only other consideration left unaccounted for is cost. (size constraint is another factor specific to a hybrid, but I have doubts that these double-wide tensor cores you mentioned would drastically increase die size on the SoC)

My thinking would be that the boost in performance and power efficiency from these double-wide tensor cores comes with a pretty hefty cost, and Nintendo found more cost-efficient ways to boost performance and save power in lieu of better tensor cores, while still ensuring they have what they need to achieve what they're after for DLSS and anything else they intend to do with them.

I guess because of this.

As Moore’s Law Slows, Apple Is Forced To Use Cheaper Chipsets In Non-Pro iPhones – Terrifying Implications For The Semiconductor Industry

The economic benefit of shrinking semiconductors has ground to a halt and Apple is going to use the same process node for the 3rd year in a row. This unprecedented slowdown in scaling has terrifyin…semianalysis.com

Let’s not get greedy. If you had told us the details we got were coming a month ago we’d have said we’d have been happy until the official reveal.

That's so true haha

Just to frame expectations better, what prerequisites must be fulfilled (in terms of clock speeds, cache, node and RAM) for Drake to be as powerful as a GTX 960/GTX 1050 Ti, and is there a realistic scenario in which it can match their rasterization performance?

For the record, here are the specs:

GeForce GTX 960

TSMC 28 nm

Die size 398mm2

1280 cores

Base clock core 924 MHz

4096 MB RAM GDDR5

120 GB/s bandwidth

Bus width 192 bit

L2 cache size 1.5 MB

2.3 TFlops

145 W

GeForce GTX 1050 Ti

Samsung 14 nm FinFET

Die size 132mm2

768 cores (6 SM)

Base core clock 1290 MHz

4096 MB RAM GDDR5

Bus width 128 bit

L2 cache size 1MB

1.9 TFlops

75 W

If Drake is anything like these, then we are in for a ride. A good one I might add.
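As a sanity check on the TFLOPs figures listed above, peak FP32 throughput is usually estimated as 2 FLOPs (one fused multiply-add) per CUDA core per clock. The sketch below applies that rule to the listed base clocks. The Drake line uses the 1536 cores at 1 GHz speculated elsewhere in this thread, which is an assumption and not a confirmed spec.

```python
def fp32_tflops(cuda_cores: int, clock_mhz: float) -> float:
    """Peak FP32 TFLOPs, assuming 2 FLOPs (one FMA) per core per clock."""
    return 2 * cuda_cores * clock_mhz * 1e6 / 1e12

gpus = {
    "GTX 960 (as listed)": (1280, 924),
    "GTX 1050 Ti": (768, 1290),
    # Speculated figures from the thread, not confirmed hardware specs.
    "Drake (speculated)": (1536, 1000),
}

for name, (cores, mhz) in gpus.items():
    print(f"{name:>20}: {fp32_tflops(cores, mhz):.2f} TFLOPs")
```

Peak TFLOPs only compare cleanly within one architecture, so this frames the question rather than answering whether Drake would match either card in rasterization.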

So, is it too expensive for Apple to use LPDDR5/X even though it is widely used by Android phones? Is this because of Apple's ultra-wide architecture?

My guess is that it's because Android smartphone manufacturers use fewer RAM chips than Apple.

Do we usually get any hardware related news at GDC? Also, has Bloomberg stated anything after the Nvidia leak?

Depends on when Nintendo plans on launching new hardware for the first question. And no for the second question.

Not actual news, but it is generally a pretty ideal environment for spreading rumors around.

Yeah. I have a hard time figuring out why Apple does that, but I'm sure they have a good reason.

Xiaomi seems to use one 64-bit LPDDR5 chip for the Xiaomi Mi 10.

Apple on the other hand uses four 16-bit 1.5 GB LPDDR4X chips for 64-bit 6 GB of LPDDR4X.
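Whether the 64 bits come from one chip or four, peak bandwidth depends on the total bus width and the transfer rate. A rough sketch follows; the data rates (4266 MT/s for LPDDR4X, 5500 MT/s for LPDDR5) are assumed typical speeds, not figures from this thread, and the 128-bit case is a guess at a configuration that would give the 88 GB/s mentioned earlier.

```python
def peak_bandwidth_gbs(bus_width_bits: int, transfer_rate_mtps: int) -> float:
    """Peak bandwidth in GB/s: bytes per transfer times transfers per second."""
    return bus_width_bits / 8 * transfer_rate_mtps / 1000

# Four 16-bit chips and one 64-bit chip both give a 64-bit bus.
print(peak_bandwidth_gbs(4 * 16, 4266))  # 64-bit LPDDR4X, assumed 4266 MT/s
print(peak_bandwidth_gbs(64, 5500))      # 64-bit LPDDR5, assumed 5500 MT/s
print(peak_bandwidth_gbs(128, 5500))     # 128-bit LPDDR5, assumed 5500 MT/s
```

Under these assumptions, the chip count changes cost and board layout but not bandwidth, and a 128-bit LPDDR5 bus at 5500 MT/s works out to 88 GB/s.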

GDC is in person this year, so it's gonna be the first time in a while where a lot of developers come and talk/share rumors and such. We're not expecting anything official, but if something is really releasing within the next 12 months it would be very surprising if we don't hear any rumblings about it shortly after GDC.

As for Bloomberg, I don't think most major publications will touch illegally leaked data with a 10-foot pole.

Ah, this makes sense.

Oh! I see, hopefully we do get something, even if it's small. I'd love it if this Switch launches at the end of 2022 or the beginning of 2023. I do need another Switch, but I'd like to know if there is a possibility of the new one launching soon so I can save for that one instead. And yeah, you are totally correct, I forgot that it was a ransomware attack for a moment there.

The fact that this thing used a modified Wii U GPU... I imagine if the Wii U had been more successful we could have seen a timeline where they built off of that ecosystem. Sharing a GPU arch would have sort of been a step in that "unifying development" they were undergoing at the time. Pretty fascinating to think about.

Ars Technica reported on the leak and the demands of the leakers.