NintenDuvo

Bob-omb

- Pronouns

- He him

For me personally, as long as the Next Switch has at least DLSS 2.0, I think we will be fine.

Hey Nate, in your opinion, what are the odds Nintendo is talking about the next device in private at Gamescom?

No one (person or outlet) is going to rush to share or report anything they get told during Gamescom.

*checks* How romantic.

double checks with Merriam-Webster

(which definition(s) am I using?)

He's bringing in the 3D graphs!

This post is a great explainer, but there are some fundamental differences between denoising and anti-aliasing that are worth clarifying. For example, I wouldn't say that anti-aliasing is deleting "real" detail. Both noise and aliasing are sampling artifacts, but their origin is very different. I'll elaborate on why:

Spatial frequency

Just like how signals that vary in time have a frequency, so do signals that vary in space. A really simple signal in time is something like sin(2 * pi * t). It's easy to imagine a similar signal in 2D space: sin(2 * pi * x) * sin(2 * pi * y). I'll have Wolfram Alpha plot it:

The important thing to notice here is that the frequency content in x and y is separable. You could have a function that has a higher frequency in x than in y, like sin(5 * pi * x) * sin(2 * pi * y):

So just like temporal frequency has dimensions of 1/[Time], spatial frequency has dimensions of 1/[Length], and each axis gets its own 1-dimensional frequency. For signals like these, the way the signal varies with x and the way it varies with y are independent. That's true in 1D, 2D, 3D... N-D, but we care about 2D because images are 2D signals.
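A quick numerical sketch of the second example: sample sin(5 * pi * x) * sin(2 * pi * y) on a grid, take a 2D DFT, and the peak lands at a different frequency in x than in y. The 32x32 grid and the [0, 2) domain are assumptions chosen so both sines complete a whole number of cycles; the row-then-column DFT works because the 2D transform is itself separable.

```python
import cmath
import math

# Assumptions for illustration: a 32x32 grid spanning [0, 2) on each axis.
# A 2-unit domain lets sin(5*pi*x) complete exactly 5 cycles, so its energy
# lands on a single DFT bin instead of leaking into neighbours.
N = 32
L = 2.0

def dft(values):
    """Naive 1D discrete Fourier transform."""
    n = len(values)
    return [sum(v * cmath.exp(-2j * math.pi * k * t / n) for t, v in enumerate(values))
            for k in range(n)]

def dominant_frequencies():
    coords = [L * i / N for i in range(N)]
    # grid[row][col] holds f(x=coords[col], y=coords[row])
    grid = [[math.sin(5 * math.pi * x) * math.sin(2 * math.pi * y) for x in coords]
            for y in coords]
    # 2D DFT = 1D DFT along every row (x), then along every column (y).
    rows = [dft(row) for row in grid]
    spectrum = [dft([rows[r][kx] for r in range(N)]) for kx in range(N)]  # [kx][ky]
    # Search non-negative frequencies up to the Nyquist bin N/2.
    kx, ky = max(((a, b) for a in range(N // 2 + 1) for b in range(N // 2 + 1)),
                 key=lambda k: abs(spectrum[k[0]][k[1]]))
    # Bins are cycles per domain; divide by L for cycles per unit length.
    return kx / L, ky / L, abs(spectrum[kx][ky])

fx, fy, magnitude = dominant_frequencies()
print(fx, fy)  # 2.5 1.0
```

So the example has 2.5 cycles per unit length in x and 1 cycle per unit length in y, and each axis can be reasoned about on its own.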

What is aliasing, really?

Those sine functions above are continuous; you know exactly what the value is at every point you can imagine. But a digital image is discrete; it's made up of a finite number of equally spaced points. To make a discrete signal out of a continuous signal, you have to sample each point on the grid. If you want to take that discrete signal back to a continuous signal, then you have to reconstruct the original signal.

Ideally, that reconstruction would be perfect. A signal is called band-limited if its highest frequency is finite. For example, in digital music, we think of most signals as band-limited to the frequencies the human ear can hear, which is generally accepted to top out around 20,000 Hz. A very important theorem in digital signal processing, the Nyquist-Shannon sampling theorem, says that you can reconstruct a band-limited signal perfectly if you sample at more than twice the highest frequency in the signal. That's why music with a 44.1 kHz sampling rate is considered lossless; 44.1 kHz is more than twice the limit of human hearing at 20 kHz, so the audible signal can be perfectly reconstructed.

When you sample at less than twice the highest frequency, it's no longer possible to perfectly reconstruct the original signal. Instead, you get an aliased representation of the data. The sampling rate of a digital image is the resolution of the sensor, in a camera, or of the display in a computer-rendered image. This sampling rate needs to be high enough to correctly represent the information that you want to capture/display; otherwise, you will get aliasing.
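A minimal sketch of what undersampling does to a single tone, reusing the audio numbers from above: anything above half the sampling rate folds back to a lower frequency, and the resulting samples are indistinguishable from a genuinely lower tone. `alias_frequency` is a hypothetical helper name for illustration.

```python
import math

def alias_frequency(f, fs):
    """Apparent frequency (in [0, fs/2]) of a tone at f Hz sampled at fs Hz."""
    f = f % fs              # sampling can't tell f apart from f +/- k*fs
    return min(f, fs - f)   # and folds the upper half of that range back down

fs = 44_100.0
print(alias_frequency(19_000.0, fs))  # 19000.0 (below Nyquist: unchanged)
print(alias_frequency(25_000.0, fs))  # 19100.0 (above 22,050 Hz: aliased)

# The samples really are indistinguishable from a 19.1 kHz tone.
n = range(100)
tone_25k = [math.cos(2 * math.pi * 25_000.0 * k / fs) for k in n]
tone_19k = [math.cos(2 * math.pi * 19_100.0 * k / fs) for k in n]
print(max(abs(a - b) for a, b in zip(tone_25k, tone_19k)))  # ~0, floating-point noise
```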

By the way, this tells us why we get diminishing returns with increasing resolution. Since the x and y components of the signal are separable, quadrupling the "resolution" in the sense of the number of pixels (for example, going from 1080p to 2160p) only doubles the Nyquist frequency in x and in y.
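The arithmetic behind that, as a tiny sketch (the helper names are hypothetical):

```python
def nyquist_per_axis(width, height):
    """Highest representable frequency, in cycles across the screen width and height."""
    return width / 2, height / 2

def compare(lo, hi):
    """Return (pixel-count ratio, Nyquist ratio in x, Nyquist ratio in y)."""
    pixel_ratio = (hi[0] * hi[1]) / (lo[0] * lo[1])
    nyq_lo, nyq_hi = nyquist_per_axis(*lo), nyquist_per_axis(*hi)
    return pixel_ratio, nyq_hi[0] / nyq_lo[0], nyq_hi[1] / nyq_lo[1]

print(compare((1920, 1080), (3840, 2160)))  # (4.0, 2.0, 2.0)
```

Four times the pixels buys only twice the representable frequency along each axis.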

So why does aliasing get explained as "jagged edges" so often? Well, any discontinuity in an image, like a geometric edge, essentially contains infinitely high frequencies. With infinitely high frequencies, the signal is not band-limited, and no sampling rate can satisfy the Nyquist-Shannon theorem. It's impossible to get perfect reconstruction. (https://pbr-book.org/3ed-2018/Sampling_and_Reconstruction/Sampling_Theory) But you can also have aliasing without a discontinuity, when the spatial resolution is too low to represent a signal (this is the reason why texture mipmaps exist; lower-resolution mipmaps are low-pass filtered to remove high-frequency content, preventing aliasing).

You can even have temporal aliasing in a game, when the framerate is too low to represent something moving quickly (for example, imagine a particle oscillating between 2 positions at 30 Hz; if your game is rendering at 60 fps or less, then by the Nyquist-Shannon theorem, the motion of the particle will be temporally aliased).
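A small sketch of the temporal case. It swaps the two-position particle for a smooth sinusoidal oscillation (an assumption, to keep the math clean) and renders it at a hypothetical 40 fps: the frames show a 10 Hz wobble that isn't really there. At exactly 60 fps, with this particular starting phase, every frame lands on a zero crossing and the motion disappears entirely.

```python
import math

OSC_HZ = 30.0   # oscillation frequency from the example above
FPS = 40.0      # hypothetical frame rate, below the 60 fps Nyquist rate

def position(t, freq):
    """Hypothetical particle position: a sinusoidal oscillation at `freq` Hz."""
    return math.sin(2 * math.pi * freq * t)

frames = [n / FPS for n in range(int(FPS))]        # one second of frames
shown = [position(t, OSC_HZ) for t in frames]      # what the game actually draws
# 30 Hz sampled at 40 fps folds to |30 - 40| = 10 Hz (with the phase flipped),
# so the rendered motion matches a slow 10 Hz wobble frame for frame.
ghost = [-position(t, 10.0) for t in frames]
worst_mismatch = max(abs(a - b) for a, b in zip(shown, ghost))

# At exactly 60 fps (with this starting phase) every frame is a zero crossing.
at_60fps = max(abs(position(n / 60.0, OSC_HZ)) for n in range(60))
print(worst_mismatch, at_60fps)   # both ~0, up to floating-point error
```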

So what do we do to get around aliasing?

The best solution, from an image quality perspective, is to low-pass filter the signal before sampling it. Which, yes, does essentially mean blurring it. For a continuous signal, the best filter is the sinc function, because it acts as a perfect low-pass filter in frequency space. But the sinc function is infinite, so the best you can do in discrete space is to use a finite approximation. That, with some hand-waving, is what Lanczos filtering is (a sinc windowed by a wider sinc so that it has finite support), and Lanczos, plus some extra functionality to handle contrast at the edges and the like, is how FSR handles reconstruction. Samples of the scene are collected in each frame, warped by the motion vectors, then filtered to reconstruct as much of the higher-frequency information as possible.
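Here is a generic 1D Lanczos resampler, as a sketch of the windowed-sinc idea. It is not FSR's code; FSR's shaders use their own approximations plus edge-adaptive logic. The choice of a = 3 lobes and the skip-and-renormalize edge handling are assumptions.

```python
import math

def sinc(x):
    """Normalized sinc: sin(pi x) / (pi x), with sinc(0) = 1."""
    if x == 0:
        return 1.0
    return math.sin(math.pi * x) / (math.pi * x)

def lanczos(x, a=3):
    """Lanczos kernel: a sinc windowed by a wider sinc, truncated to |x| < a."""
    if abs(x) >= a:
        return 0.0
    return sinc(x) * sinc(x / a)

def resample(samples, pos, a=3):
    """Interpolate `samples` (unit spacing) at fractional position `pos`."""
    first = math.floor(pos) - a + 1
    total, weight_sum = 0.0, 0.0
    for i in range(first, first + 2 * a):    # the 2a taps nearest to pos
        if 0 <= i < len(samples):            # skip taps that fall off the ends
            w = lanczos(pos - i, a)
            total += w * samples[i]
            weight_sum += w
    return total / weight_sum                # renormalize so flat areas stay flat

signal = [0, 0, 1, 1, 1, 0, 0]
print([round(resample(signal, p / 2), 3) for p in range(2 * len(signal) - 1)])
```

Interpolating a hard step this way gives values slightly below 0 right next to the edge (for example at position 0.5). That small overshoot is the ringing Lanczos is known for, which upscalers typically need to limit around high-contrast edges.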

The old-school methods of anti-aliasing, like supersampling and MSAA, worked similarly. You take more samples than you need (in the case of MSAA, selectively near edges), then low-pass filter them to generate a final image without aliasing. By the way, even though it seems like an intuitive choice, the averaging filter (e.g. taking four 4K pixels and averaging them into a single 1080p pixel) is actually kind of a shitty low-pass filter, because it introduces ringing artifacts in frequency space: its frequency response is a sinc with slowly decaying sidelobes, so high-frequency content leaks through and aliases. Lanczos is much better.
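One way to check the averaging claim is to compare frequency responses for a 1D downsample by 2, as a sketch. The 2-sample box average stands in for "four 4K pixels into one 1080p pixel" along one axis; the 8-tap Lanczos-2 kernel (stretched by the scale factor and normalized) is an assumed comparison point, not any particular upscaler's filter.

```python
import math

def sinc(x):
    return 1.0 if x == 0 else math.sin(math.pi * x) / (math.pi * x)

def lanczos(x, a=2):
    return sinc(x) * sinc(x / a) if abs(x) < a else 0.0

# Each output pixel sits halfway between two input pixels, so taps sit at
# half-integer offsets measured in input pixels.
BOX = {-0.5: 0.5, 0.5: 0.5}                        # plain 2-sample average
offsets = [k + 0.5 for k in range(-4, 4)]           # -3.5 ... 3.5
raw = {d: lanczos(d / 2) for d in offsets}          # kernel stretched 2x for downsampling
norm = sum(raw.values())
LANCZOS = {d: w / norm for d, w in raw.items()}     # weights sum to 1

def gain(taps, f):
    """Magnitude response at frequency f (cycles per input pixel)."""
    re = sum(w * math.cos(2 * math.pi * f * d) for d, w in taps.items())
    im = sum(w * math.sin(2 * math.pi * f * d) for d, w in taps.items())
    return math.hypot(re, im)

# The output grid's Nyquist limit is 0.25 cycles per input pixel; anything
# above it that survives the filter will alias in the downsampled image.
for f in (0.1, 0.25, 0.35, 0.45):
    print(f, round(gain(BOX, f), 3), round(gain(LANCZOS, f), 3))
```

With these weights, the box filter passes noticeably more energy above the new Nyquist limit (for example at 0.35 cycles per input pixel) than the Lanczos taps do, and whatever passes there shows up as aliasing in the 1080p output.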

An alternative way to do the filtering is to use a convolutional neural network (specifically, a convolutional autoencoder). DLDSR is a low-pass filter for spatial supersampling, and of course, DLSS does reconstruction. These are preferable to Lanczos because, since the signal is discrete and not band-limited, there's no perfect analytical filter for reconstruction. Instead of doing contrast-adaptive shenanigans like FSR does, you can just train a neural network to do the work. (And, by the way, if Lanczos were the ideal filter, then the neural network would learn to reproduce Lanczos, because a neural network is a universal function approximator; with enough nodes, it can approximate any continuous function.) Internally, the convolutional neural network downsamples the image several times while learning relevant features about the image, then uses the learned features to reconstruct the output image.

What's different about ray tracing, from a signal processing perspective?

(I have no professional background in rendering. I do work that involves image processing, so I know more about that. But I have done some reading about this for fun, so let's go).

When light hits a surface, some of it is absorbed and some of it is scattered (reflected or transmitted). To calculate the light leaving the surface, you have to solve what's called the light transport equation, which is essentially an integral of the incoming light weighted by a function (the BSDF) that describes how the material scatters light. But in most cases, this equation does not have an exact, analytic solution. Instead, you need to use a numerical approximation.
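For reference, the light transport equation for a surface point is usually written roughly like this (notation follows the PBR book's surface form: L_o is outgoing radiance, L_e emitted radiance, f the BSDF, L_i incoming radiance, and theta_i the angle between omega_i and the surface normal). The second line is the standard Monte Carlo estimator, where pdf is whatever distribution the N sampled directions are drawn from:

```latex
\begin{aligned}
L_o(p, \omega_o) &= L_e(p, \omega_o)
  + \int_{\mathcal{S}^2} f(p, \omega_o, \omega_i)\, L_i(p, \omega_i)\, \lvert \cos\theta_i \rvert \, \mathrm{d}\omega_i \\
&\approx L_e(p, \omega_o)
  + \frac{1}{N} \sum_{j=1}^{N} \frac{f(p, \omega_o, \omega_j)\, L_i(p, \omega_j)\, \lvert \cos\theta_j \rvert}{\mathrm{pdf}(\omega_j)}
\end{aligned}
```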

Monte Carlo algorithms numerically approximate an integral by randomly sampling over the integration domain. Path tracing is the application of a Monte Carlo algorithm to the light transport equation. Because you are randomly sampling, you get image noise, which shrinks as you take more random samples. But if you have a good denoising algorithm, you can reduce the number of samples needed for convergence. Unsurprisingly, convolutional autoencoders are also very good at this (because, again, universal function approximators). Again, I'm not in this field, but I mean, Nvidia's published on it before (https://research.nvidia.com/publica...n-monte-carlo-image-sequences-using-recurrent). It's out there!
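A toy Monte Carlo sketch, assuming a uniformly bright sky (BSDF times incoming light set to 1) so the integral reduces to the integral of cos(theta) over the hemisphere, which is exactly pi. Each estimate is noisy, and the average error falls off roughly as 1/sqrt(N), which is why path-traced images need either a lot of samples or a denoiser.

```python
import math
import random

def estimate_cosine_integral(n, rng):
    """Monte Carlo estimate of the integral of cos(theta) over the hemisphere.

    The exact answer is pi. Directions are drawn uniformly over the hemisphere
    (pdf = 1 / (2 * pi)); for that distribution, cos(theta) is itself uniform
    on [0, 1], so it can be drawn directly.
    """
    pdf = 1.0 / (2.0 * math.pi)
    total = 0.0
    for _ in range(n):
        cos_theta = rng.random()      # cos(theta) of a uniform hemisphere direction
        total += cos_theta / pdf      # integrand value divided by its pdf
    return total / n

rng = random.Random(1234)
mean_errors = {}
for n in (4, 64, 1024, 16384):
    errors = [abs(estimate_cosine_integral(n, rng) - math.pi) for _ in range(50)]
    mean_errors[n] = sum(errors) / len(errors)
    print(n, round(mean_errors[n], 4))   # error shrinks roughly like 1 / sqrt(n)
```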

And yes, you can have aliasing in ray-traced images. If you took all the ray samples from the same pixel grid, and you happen to come across any high-frequency information, it would be aliased. So instead, you can randomly distribute the Monte Carlo samples, using some sampling algorithm (https://www.pbr-book.org/3ed-2018/Monte_Carlo_Integration/Careful_Sample_Placement).
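A 1D sketch of that trade-off, using a hypothetical stripe pattern at 0.9 cycles per pixel (above the 0.5 cycles-per-pixel Nyquist limit). One sample at each pixel center produces a clean but false low-frequency pattern; stratified random samples inside each pixel instead converge toward the pixel's true average, leaving only a little noise. Stratified jitter is just one sampling choice among many, picked here for simplicity.

```python
import math
import random

FREQ = 0.9   # hypothetical stripe frequency in cycles per pixel (Nyquist is 0.5)

def scene(x):
    """Hypothetical 1D scene: brightness stripes at FREQ cycles per pixel."""
    return 0.5 + 0.5 * math.cos(2 * math.pi * FREQ * x)

def pixel_centers(width):
    # One sample per pixel on a fixed grid: the stripes alias into a slow
    # 0.1-cycle-per-pixel wave that isn't in the scene at all.
    return [scene(px + 0.5) for px in range(width)]

def jittered(width, strata, rng):
    # Stratified jitter: split each pixel into `strata` cells and take one
    # random sample per cell. The average approaches the pixel's true
    # (box-filtered) brightness; the leftover error is noise, not a pattern.
    out = []
    for px in range(width):
        samples = [scene(px + (s + rng.random()) / strata) for s in range(strata)]
        out.append(sum(samples) / strata)
    return out

rng = random.Random(7)
centers = pixel_centers(32)
jitter = jittered(32, 16, rng)
print("pixel-center range:", round(max(centers) - min(centers), 3))
print("jittered range:    ", round(max(jitter) - min(jitter), 3))
```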

Once you have the samples, DLSS is already very similar in structure to a denoising algorithm. If, for example, the Halton sampling algorithm (https://pbr-book.org/3ed-2018/Sampling_and_Reconstruction/The_Halton_Sampler) for distributing Monte Carlo samples sounds familiar, it's because it's the algorithm that Nvidia recommends for subpixel jittering in DLSS. So temporal upscalers like DLSS already exploit pseudo-random (low-discrepancy) sample distributions to sample and reconstruct higher-frequency information. That's why it makes sense to combine the DLSS reconstruction passes for rasterized and ray-traced samples: in many ways, the data are structured and processed very similarly.
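A sketch of Halton(2, 3) sub-pixel jitter offsets, built from the standard radical-inverse construction. It is not NVIDIA's code; the starting index and the number of phases are assumptions here, and real integrations should follow the DLSS documentation for those details.

```python
def radical_inverse(index, base):
    """Van der Corput radical inverse: mirror the base-`base` digits of index
    around the radix point (e.g. 6 = 110 in base 2 -> 0.011 = 0.375)."""
    result, fraction = 0.0, 1.0
    while index > 0:
        fraction /= base
        result += fraction * (index % base)
        index //= base
    return result

def halton_jitter(count):
    """Sub-pixel (dx, dy) offsets in (-0.5, 0.5) from the Halton(2, 3) sequence.

    Starts at index 1 to skip the all-zero first point. How many phases to
    cycle through is an application choice; this sketch just returns `count`.
    """
    return [(radical_inverse(i, 2) - 0.5, radical_inverse(i, 3) - 0.5)
            for i in range(1, count + 1)]

for dx, dy in halton_jitter(8):
    print(f"{dx:+.4f} {dy:+.4f}")
```

Unlike independent random offsets, consecutive Halton points fill the pixel evenly, so a handful of frames already covers it without clumping.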

tl;dr

Aliasing is an artifact of undersampling a high-frequency signal. Good anti-aliasing methods filter out the high frequency information before sampling to remove aliasing from the signal. Temporal reconstruction methods, like DLSS and FSR, use randomly jittered samples collected over multiple frames to reconstruct high frequency image content.

Noise in ray tracing is an artifact of randomly sampling rays using a Monte Carlo algorithm. Instead of taking large numbers of random samples, denoising algorithms attempt to reconstruct the signal from a noisy input.

As far as I know, the Meta Quest 2 uses an SD 865. Apple is on a different level because of how they handle their software.

A lot of times we are comparing the NG Switch with the Steam Deck, which makes sense considering they are both dedicated gaming hardware with similar form factors.

But considering they are all ARM, has anyone done any productive spec and graphical power comparisons of NG Switch with M1 in iPad and MacBooks and/or Meta Quest 2 and 3?

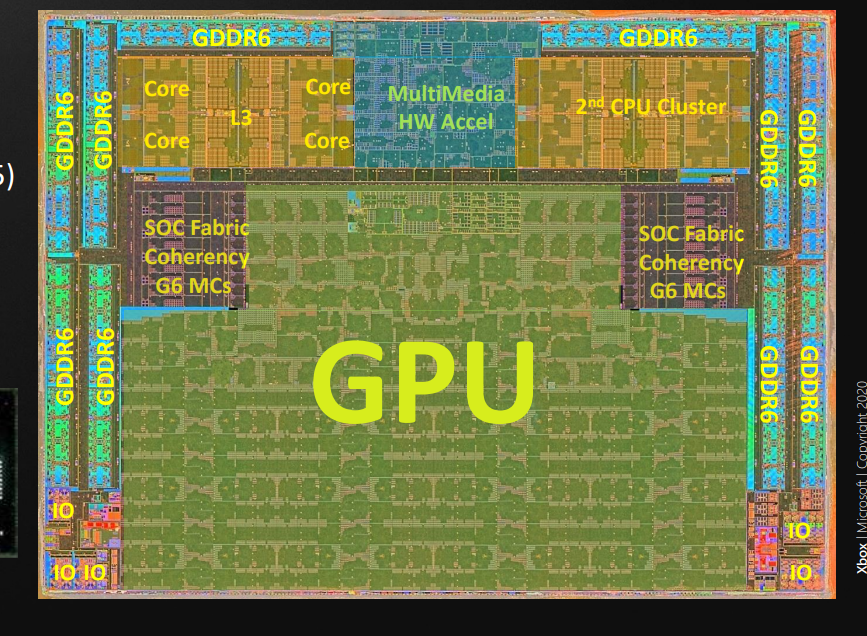

Let's just look at the PS5 and Switch 2 from the specs we have; it's simple to figure out whether this is a next-gen product or a "pro" model.

PS4 uses GCN from 2011 as its GPU architecture, and it has 1.84 TFLOPs available to it. We will use GCN as the baseline, so its performance factor is 1.

Switch uses Maxwell v3 from 2015, and it has 0.393 TFLOPs available to it. The performance factor of Maxwell v3 is 1.4, plus mixed precision for games that use it. This means that, when docked, Switch is capable of 550 to 825 GFLOPs GCN-equivalent, still a little less than half the GPU performance of PS4. This doesn't factor in the far lower bandwidth, RAM amount, or CPU performance, all of which sit around 30-33% of the PS4, with the GPU somewhere around 45% when completely optimized.

PS5 uses RDNA 1.X, customized in part by Sony and introduced with the PS5 in 2020, and it has up to 10.2 TFLOPs available to it. The performance factor of RDNA 1.X is 1.2, plus mixed precision (though in practice this is limited to AI use cases; developers just don't use mixed precision at the moment for console or PC gaming, although it's used heavily in mobile and in Switch development). Ultimately, this means the PS5's GPU is about 6.64 times as powerful as the PS4, and around 3 times the PS4 Pro.

Switch 2 uses Ampere, specifically GA10F, a custom GPU that will be introduced with the Switch 2 in 2024 (hopefully), and it has 3.456 TFLOPs available to it. The performance factor of Ampere is 1.2, plus mixed precision* (this uses the tensor cores and is independent of the shader cores). Mixed precision offers 5.2 to 6 TFLOPs. According to our estimates, it also reserves a quarter of the tensor cores for DLSS. Much like the PS5 using FSR2, this allows the device to render the scene at a quarter of the output resolution with minimal loss in image quality, greatly boosting available GPU performance and allowing the device to output a 4K image.

Converting these numbers to PS4 GCN terms, Switch 2 has 4.14 to 7.2 TFLOPs and PS5 has 12.24 TFLOPs GCN-equivalent, meaning that Switch 2 will do somewhere between 34% and 40% of PS5. It should also manage RT performance, and while PS5 will use some of its 10.2 TFLOPs to run FSR2, Switch 2 can freely use the reserved quarter of its tensor cores to handle DLSS. Ultimately there are other bottlenecks: the CPU is only going to be about 2/3 as fast as the PS5's, and bandwidth, relative to their architectures, will only be about half as much, though it could offer 10GB+ for games, which is pretty standard for current-gen games at the moment.
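The GCN-equivalent arithmetic above, reproduced as a sketch so the numbers can be checked. The architecture factors are the post's own estimates, not official figures. With the rounded 1.84 TFLOPs figure, PS5 comes out at about 6.65x PS4 (6.64x with 1.843). The FP32 figure gives the 34% lower bound; plugging in the 7.2 TFLOPs mixed-precision figure gives about 59% of PS5, so the quoted 40% upper bound must rest on assumptions the post doesn't spell out.

```python
# Inputs and "architecture factors" as stated in the post (GCN = 1.0 baseline).
# The factors are the poster's estimates, not official figures.
PS4_TF = 1.84
SWITCH_TF, MAXWELL_FACTOR = 0.393, 1.4
PS5_TF, RDNA_FACTOR = 10.2, 1.2
SWITCH2_TF, AMPERE_FACTOR = 3.456, 1.2
SWITCH2_MIXED_TF = (5.2, 6.0)   # stated mixed-precision range

def gcn_equivalent(tflops, factor):
    """Scale raw TFLOPs by an architecture factor relative to GCN."""
    return tflops * factor

switch = gcn_equivalent(SWITCH_TF, MAXWELL_FACTOR)      # docked, FP32 only
ps5 = gcn_equivalent(PS5_TF, RDNA_FACTOR)
switch2_fp32 = gcn_equivalent(SWITCH2_TF, AMPERE_FACTOR)
switch2_mixed_hi = gcn_equivalent(SWITCH2_MIXED_TF[1], AMPERE_FACTOR)

print(f"Switch (docked): {switch:.3f} TF GCN-equivalent")
print(f"PS5: {ps5:.2f} TF GCN-equivalent, {ps5 / PS4_TF:.2f}x PS4")
print(f"Switch 2: {switch2_fp32:.2f} to {switch2_mixed_hi:.2f} TF GCN-equivalent")
print(f"Switch 2 / PS5: {switch2_fp32 / ps5:.0%} (FP32) to {switch2_mixed_hi / ps5:.0%} (mixed)")
```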

Switch 2 is going to manage current-gen games much better than Switch did with last-gen games. The jump is bigger, the technology is a lot newer, and the addition of DLSS has leveled the playing field a lot, not to mention Nvidia's edge in RT playing a factor. I'd suggest that Switch 2 when docked, if using mixed precision, will be noticeably better than the Series S, but noticeably behind the current-gen consoles.

You’re wasting your time.

He still thinks this new console is no different to a Switch Lite or Switch OLED being introduced to the Switch family line.

Totally different GPU architecture, and because it’s also a different software stack, there is very little in the way of useful apples-to-apples benchmarks. Especially not informative to the average public: “plays games as well as an iPad” doesn’t help most people visualize what it can do.

Nintendo, specifically? Hard to say. Greater than 0% but less than 100%.

Industry chatter will be taking place, for certain. I have little birds waiting to sing their song to me once Gamescom concludes. Such songs may be kept under frie-NDA.

Glad someone got the reference.

That's probably all it will have tbh

God I love this thread so much. Always learning cool shit.

Ray reconstruction will be there as well. There's no need for Reflex in a dedicated system, and we already know frame gen is off the cards.

Which will be great, and by the time a pro revision occurs, they should be up to DLSS 4.0 or something.

Is it actually?

Drake is an Ampere card, so that already rules it out. Even if it were Lovelace, to make frame gen work you need a good deal of headroom. The reason you can get such high frame rates with frame gen is that you're not working your GPU as hard with DLSS, giving the GPU time to create those new intermediate frames. If you're already running at peak usage, you don't have enough spare performance to create those new frames. The problem gets worse the lower down the performance stack you go.

Well yeah, FFXVI is still fresh in a lot of people's minds.

And Nvidia Reflex forces less-than-full GPU usage in order to keep latency down, which makes it an even poorer fit for a GPU-strapped environment. Frame gen, at least the current iteration, just isn't a good fit.

Yeah and?

It’s slowing down because of hitting saturation, not because it’s losing engagement.

Because it would be a very bad, shortsighted move.

I think people are overestimating how much of the Switch userbase will really NEED to play Mario Kart and Animal Crossing and such in 4K with better graphics, and be willing to pay another $400 any time soon to do it. So why not cater to them for another 5-6 years?

Because putting their games on the old console would deter people from buying the new one, and again, they NEED people to buy the new one since the old one is on its way out.

But why, though.

I can see them stop making TX1+ hardware by 2026, sure. But why stop putting most of their big games on them?

It will be no more than 3 years, and it will only be small releases and remakes/remasters. The next 3D Mario/Mario Kart/Zelda/Smash/Animal Crossing/Splatoon/Pokemon will not release on Switch 1.

Even the people who don’t like what I’m saying will agree that most Nintendo games will appear on the OLED/Lite for at least 3 years after this new model releases.

Huh... The Wii U was a home console, the Switch is handheld.

The Wii U -> Switch power differential was relatively minimal...yet we knew it was being treated as a gen breaking successor and knew it was completely replacing and supplanting the Wii U. Similar to knowing the Series S was completely supplanting the Xbox One X despite not having huge differentials.

In this particular Switch situation, we have none of that. It would be the exact same team and development making a low-end profile and then using the new hardware to make a high-end profile; they don’t have to focus on two different versions built separately from the ground up.

But I would suppose Nintendo will use it how it appears to be designed: render Switch games at their OLED TX1+ profiles and use the power of the new SoC to output them at much higher resolutions than they can now, giving their games far better performance than they can squeeze out now, with extra headroom to raise the visual IQ to PS4 Pro/One X levels.

It will be like modern PC development, except Nintendo is only optimizing for two profiles.

And most importantly, it’s the same base architecture that’s in the new hardware, whose design is specifically geared to run low-profile games and output them at a high profile.

You’d be leaving so much performance on the table

We all agree this is what will be done with the eventual Metroid Prime 4 release, yes? They are going to take the game they have been developing on the TX1+ and use the power of T239 to make it look and run a lot better on that machine.

...off to the speed of light? Surrender now or prepare to fight?

This best not happen. Any channel that does so... I'll blast.

Let’s say that structure looks more private from this perspective than it actually is. Sega, on the other hand, is a fort.

Looks like the exact structure you'd want to place on the convention floor if you wanted a sealed and private space where Ninty can whisper Drake specs to developers.

Because Sega doesn't want people to see them showing off the Dreamcast 2.

More specifically, it's adding unnecessary performance to a device that is so far outside the scope of this proposed "Switch games but at higher res" positioning that such a device with a T239 would be an active and egregious waste of money. And no one should be under the illusion that any shareholder would consider that a good thing, when it's easy to identify that there are much cheaper and far more power-efficient options than what T239 is being designed to be capable of.

This is absolutely fair, but let's look at the complete context here. The pre-Switch speculation was based on Nate's reporting of a Pascal-based 16nm Tegra; Tegra X1 was ultimately not that chip. However, I didn't take mixed precision into account, because at the time no one really expected developers to utilize it. The Switch has 393 GFLOPs of FP32 performance, but developers utilize up to 600 GFLOPs from the Nintendo Switch thanks to mixed precision.

Yeah, but you get the mobile TFLOPs by halving the docked TFLOPs in general terms. Do people really expect Switch 2 to be 3x the current Switch's docked mode in handheld form?... That line of thinking is wishful thinking imo when you consider they have shown time and time again that they value battery life, even at the cost of running games at 360p-480p resolution in handheld mode. An issue I rarely see people talk about is active cooling and the costs involved in cooling a chip running at one third to two thirds more wattage than a chip like Tegra X1, which only needs a tiny, tiny fan.

@mjayer

I absolutely adore @Z0m3le, but let's be real: if you followed him during the pre-Switch and Switch Pro speculation years, he has consistently and massively overshot how high Nintendo will clock their chipsets, so actual performance has come in well below his expectations.

I 100% think Switch 2 will be massively more powerful than the current Switch, but starting to compare it to current-gen machines with good desktop-class CPUs, their massive, fast RAM pools, their ridiculously fast SSDs, and their GPUs (which, in a best-case scenario, are still going to be 3-5x the performance of a best-case Switch 2 GPU) is just setting yourself up for disappointment imo.

Switch 2 will be around top-end Switch-level visuals with more complex geometry, better textures, and better-quality lighting and models, while running at much higher resolutions (mainly due to DLSS) and more stable framerates, and again getting about 20-25% of the latest and greatest big AAA third-party games. It's not going to be the N64 to GameCube leap some think. It's going to be the PS4 to PS5 leap, which causes arguments and has the more core audience asking "is this it, is this next gen?" when it comes to visuals in games like Starfield or Spider-Man 2 (I think both look phenomenal personally, and they go for aims not necessarily to do with visuals, but either speed of traversal or scale unseen before in the AAA space).

Will this please the hardcore among us? Probably not, but is it enough to please the 100+ million consumers that Nintendo will want to capture with the device?... Of course.

Same thing with NSO games running on Switch, so much wasted power.

You’d be leaving so much performance on the table.

What you are saying here makes sense for a wide variety of Nintendo's software, but you have to remember that the GPU isn't just 9 years newer than Tegra X1 with features like DLSS and ray tracing built into the fundamental design of the chip; it's also a GPU that is 6 times bigger than the Tegra X1, on a process node that is much, much better than the 8nm Samsung node used by every current Ampere chip. DLSS alone is enough to get the results you are talking about: even if the GPU weren't more powerful, a Switch game like Metroid Prime 4 that runs at 720p docked on Switch could run at 1440p on Switch 2 via DLSS. However, it's at least SIX times more powerful than Tegra X1, and it has features like variable rate shading, which offers a ~20% increase in performance, and mesh shading, which offers a ~25% increase in performance... The CPU is also 3 times faster per clock, with at least a 50% higher clock and 133% more cores, which means the CPU has at minimum 10 times the performance, and the RAM is 3 times larger and 4 times faster... There is a lot more than XB1/PS4-gen visuals going on here.

Just wanted to say I’ve always loved reading your posts. Read them all. I’ve read this entire thread. I know full well what the speculated/expected hardware specs for this thing are. I get it.

I’m not arguing against the specs.

I’m arguing how new Switch hardware will be positioned by Nintendo.

Power differentials aren’t the be all, end all of how a console is positioned and treated.

The Wii U -> Switch power differential was relatively minimal...yet we knew it was being treated as a gen breaking successor and knew it was completely replacing and supplanting the Wii U. Similar to knowing the Series S was completely supplanting the Xbox One X despite not having huge differentials.

Likewise, large power differentials in spec don’t necessarily dictate that it must be a gen-breaking successor either.

T239 seems like a fantastic mobile DLSS machine, right? It seems optimized to efficiently and competently run AI DLSS and some light RT on their respective cores at extremely minimal clocks and power draws.

This is amazing.

But I would suppose Nintendo will use it the way it appears to be designed: render Switch games at their OLED TX1+ profiles and use the power of the new SoC to output them at much higher resolutions than they can now, giving their games far better performance than they can squeeze out now, with extra headroom to raise the visual IQ to PS4 Pro/One X levels.

Right?

It will be like modern PC development, except Nintendo is only optimizing for two profiles. Starfield, for example, is releasing with minimum specs of a GPU from 2016 and a CPU from 2015. People will also be playing it on their RTX 4090 and 12-core CPU from 2022. It’s fundamentally the same game.

Why wouldn’t Nintendo approach game development like this for most of their games over the next 5 years or so?

We all agree this is what will be done with the eventual Metroid Prime 4 release, yes? They are going to take the game they have been developing on the TX1+ and use the power of T239 to make it look and run a lot better on that machine.

Now, if there is some out of the blue “gimmick” that we do not see coming that drives Nintendo to change the way we play their games and can only be done on the T239…then all bets are off.

I’m just going by what we know the hardware is, what it’s designed to do, and what Nintendo has said concerning their devices, the future and the Switch.

I just want to say that I do appreciate your posts.

This post is a great explainer, but there are some fundamental differences between denoising and antialiasing that are worth clarifying. For example, I wouldn't say that anti-aliasing is deleting "real" detail. Both noise and aliasing are sampling artifacts, but their origin is very different. I'll elaborate on why:

Spatial frequency

Just like how signals that vary in time have a frequency, so do signals that vary in space. A really simple signal in time is something like sin(2 * pi * t). It's easy to imagine a similar signal in 2D space: sin(2 * pi * x) * sin(2 * pi * y). I'll have Wolfram Alpha plot it:

The important thing to notice here is that the frequency content in x and y is separable. You could have a function that has a higher frequency in x than in y, like sin(5 * pi * x) * sin(2 * pi * y):

So just like time frequency has dimensions 1/[Time], spatial frequency is a 1-dimensional concept with dimensions 1/[Length]. The way the signal varies with x and the way it varies with y are independent. That's true in 1D, 2D, 3D... N-D, but we care about 2D because images are 2D signals.
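Since the Wolfram Alpha plots don't survive here, a minimal NumPy sketch (my own illustration, not from the post) can show the same separability in frequency space. It samples sin(5 * pi * x) * sin(2 * pi * y) on a grid and reports where the 2D spectrum has energy. The 64 x 64 grid and the sampled region [0, 2) in both axes are assumptions, chosen so both sines complete a whole number of cycles and the spectrum has clean peaks.

```python
import numpy as np


def spectral_peaks(n: int = 64, extent: float = 2.0):
    """Sample f(x, y) = sin(5*pi*x) * sin(2*pi*y) on an n x n grid over [0, extent)
    and return the (x frequency, y frequency) pairs, in cycles per unit length,
    where the 2D spectrum has significant energy."""
    coords = np.arange(n) * (extent / n)          # sample positions along each axis
    X, Y = np.meshgrid(coords, coords)            # rows index y, columns index x
    f = np.sin(5 * np.pi * X) * np.sin(2 * np.pi * Y)
    spectrum = np.abs(np.fft.fft2(f))             # axis 0 -> y frequency, axis 1 -> x frequency
    freqs = np.fft.fftfreq(n, d=extent / n)       # bin index -> cycles per unit length
    rows, cols = np.nonzero(spectrum > 0.5 * spectrum.max())
    return sorted({(float(freqs[c]), float(freqs[r])) for r, c in zip(rows, cols)})


print(spectral_peaks())
```

The energy sits only at ±2.5 cycles per unit in x and ±1 cycle per unit in y: the x frequency and the y frequency show up independently, which is the separability the post describes.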

What is aliasing, really?

Those sine functions above are continuous; you know exactly what the value is at every point you can imagine. But a digital image is discrete; it's made up of a finite number of equally spaced points. To make a discrete signal out of a continuous signal, you have to sample each point on the grid. If you want to take that discrete signal back to a continuous signal, then you have to reconstruct the original signal.

Ideally, that reconstruction would be perfect. A signal is called band-limited if it contains no frequencies above some finite maximum frequency. For example, in digital music, we think of most signals as band-limited to the frequencies that the human ear can hear, which is generally accepted to be up to around 20,000 Hz. A very important theorem in digital signal processing, called the Nyquist-Shannon theorem, says that you can reconstruct a band-limited signal perfectly if you sample at more than twice the highest frequency in the signal. That's why music with a 44.1 kHz sampling rate (the CD standard) is considered lossless; 44.1 kHz is more than twice the limit of human hearing at 20 kHz, so the audio signal can be perfectly reconstructed.

When you sample at less than twice the highest frequency, it's no longer possible to perfectly reconstruct the original signal. Instead, you get an aliased representation of the data: frequencies above half the sampling rate fold back and masquerade as lower frequencies. The sampling rate of a digital image is the resolution of the sensor in a camera, or the rendering resolution in a computer-rendered image. This sampling rate needs to be high enough to correctly represent the information that you want to capture/display; otherwise, you will get aliasing.
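To see the folding in the audio example, here is a small sketch (an illustration, not from the post) that samples a pure cosine for one second at the CD rate of 44.1 kHz and reports the strongest frequency in the sampled data. The tone frequencies (10 kHz and 30 kHz) are arbitrary choices for the demo.

```python
import numpy as np


def apparent_frequency(tone_hz: float, sample_rate_hz: int) -> float:
    """Sample a cosine tone for one second and return the frequency of the
    strongest component in the sampled data, i.e. the tone you'd get back
    after ideal reconstruction."""
    n = sample_rate_hz                       # one second of samples -> 1 Hz frequency bins
    t = np.arange(n) / sample_rate_hz        # sample times in seconds
    samples = np.cos(2 * np.pi * tone_hz * t)
    spectrum = np.abs(np.fft.rfft(samples))  # bins cover 0 .. sample_rate / 2
    bin_width_hz = sample_rate_hz / n
    return float(np.argmax(spectrum)) * bin_width_hz


for tone in (10_000, 30_000):
    print(f"{tone} Hz tone sampled at 44.1 kHz -> {apparent_frequency(tone, 44_100):.0f} Hz")
```

The 10 kHz tone is below the 22.05 kHz Nyquist limit and comes back unchanged; the 30 kHz tone cannot be represented and shows up as a 14.1 kHz alias (44.1 - 30).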

By the way, this tells us why we get diminishing returns with increasing resolution. Since the x and y components of the signal are separable, quadrupling the "resolution" in the sense of the number of pixels (for example, going from 1080p to 2160p) only doubles the Nyquist frequency in x and in y.
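The diminishing-returns arithmetic can be spelled out in a few lines. This sketch (an illustration, measuring frequency in cycles across the full screen) compares pixel count with the per-axis Nyquist limit for 1080p and 2160p.

```python
def nyquist_cycles(width_px: int, height_px: int) -> tuple[float, float]:
    """Highest spatial frequency each axis can represent, in cycles across the full screen."""
    return width_px / 2, height_px / 2


for w, h in [(1920, 1080), (3840, 2160)]:
    nx, ny = nyquist_cycles(w, h)
    print(f"{w}x{h}: {w * h:,} pixels, Nyquist {nx:.0f} cycles in x, {ny:.0f} cycles in y")
```

Going from 1080p to 2160p multiplies the pixel count by 4, but each axis only gains a factor of 2 in representable frequency.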

So why does aliasing get explained as "jagged edges" so often? Well, any discontinuity in an image, like a geometric edge, contains frequency content that extends all the way to infinity. With unbounded frequencies, the signal is not band-limited, and there's no sampling rate that can satisfy the Nyquist-Shannon theorem. It's impossible to get perfect reconstruction. (https://pbr-book.org/3ed-2018/Sampling_and_Reconstruction/Sampling_Theory) But you can also have aliasing without a discontinuity, when the spatial resolution is too low to represent a signal (this is the reason why texture mipmaps exist; lower-resolution mipmaps are low-pass filtered to remove high-frequency content, preventing aliasing).
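The mipmap point can be shown with a tiny texture. This sketch (an illustration; real engines and drivers may use better filters than the simple 2x2 box average used here) halves an 8x8 texture of 1-texel stripes two ways: by keeping every other texel, and by averaging 2x2 blocks.

```python
import numpy as np


def next_mip_level(texture: np.ndarray) -> np.ndarray:
    """Halve the resolution by averaging each 2x2 block (a simple box low-pass filter)."""
    h, w = texture.shape
    return texture.reshape(h // 2, 2, w // 2, 2).mean(axis=(1, 3))


def naive_decimate(texture: np.ndarray) -> np.ndarray:
    """Halve the resolution by keeping every other texel, with no filtering."""
    return texture[::2, ::2]


# 8x8 texture of 1-texel-wide vertical stripes: the highest frequency it can hold
stripes = np.tile([0.0, 1.0], (8, 4))

print(naive_decimate(stripes))   # every kept texel is black: the stripes alias to a flat color
print(next_mip_level(stripes))   # every texel is 0.5: the detail is filtered to its average gray
```

Without filtering, the stripes turn into a completely wrong flat black (and shimmer between black and white as the texture shifts); with filtering, the unrepresentable detail becomes its average gray.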

You can even have temporal aliasing in a game, when the framerate is too low to represent something moving quickly (for example, imagine a particle oscillating between 2 positions at 30 Hz; if your game is rendering at 60 fps or lower, then by the Nyquist-Shannon theorem, the motion of the particle will be temporally aliased).
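The same folding applies in time, with the framerate as the sampling rate. This sketch (an illustration that only tracks the fundamental frequency; a particle jumping between two positions also has harmonics) computes how fast the 30 Hz oscillation appears to move at a few framerates.

```python
def apparent_motion_hz(oscillation_hz: float, fps: float) -> float:
    """Frequency an oscillation appears to have when it is sampled once per frame.

    Folds the true frequency into the range [0, fps / 2]. Only the fundamental
    is considered.
    """
    folded = oscillation_hz % fps
    return min(folded, fps - folded)


for fps in (120, 45, 40, 30):
    print(f"30 Hz oscillation rendered at {fps} fps looks like {apparent_motion_hz(30, fps):g} Hz")
```

At 120 fps the motion is represented correctly; at 45 and 40 fps it appears as a slower 15 Hz or 10 Hz wobble, and at 30 fps the particle looks frozen in place.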

So what do we do to get around aliasing?

The best solution, from an image quality perspective, is to low-pass filter the signal before sampling it. Which, yes, does essentially mean blurring it. For a continuous signal, the ideal filter is the sinc function, because it acts as a perfect low-pass filter in frequency space. But the sinc function extends infinitely in space, so the best you can do in discrete space is to use a finite approximation. That, with some hand-waving, is what Lanczos filtering is (a sinc windowed by a wider sinc), and Lanczos filtering (plus some extra functionality to handle contrast at the edges and the like) is how FSR handles reconstruction. Samples of the scene are collected in each frame, warped by the motion vectors, then filtered to reconstruct as much of the higher-frequency information as possible.
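A minimal sketch of 1D Lanczos resampling may help make the "finite approximation of sinc" idea concrete. This is a generic textbook Lanczos filter with an assumed window size of a = 3; it is not FSR's actual implementation, which adds the edge/contrast handling mentioned above and works in 2D.

```python
import numpy as np


def lanczos_kernel(x, a: int = 3):
    """Lanczos window: sinc(x) * sinc(x / a) for |x| < a, zero outside.

    np.sinc is the normalized sinc, sin(pi * x) / (pi * x).
    """
    x = np.asarray(x, dtype=float)
    return np.where(np.abs(x) < a, np.sinc(x) * np.sinc(x / a), 0.0)


def lanczos_resample(samples, positions, a: int = 3):
    """Reconstruct values at (possibly fractional) positions from unit-spaced samples."""
    samples = np.asarray(samples, dtype=float)
    taps = np.arange(len(samples))
    out = []
    for p in np.atleast_1d(positions):
        weights = lanczos_kernel(p - taps, a)
        # Normalize so the weights sum to 1 (keeps flat regions flat near the borders)
        out.append(np.dot(weights, samples) / weights.sum())
    return np.array(out)


# Usage: 2x upsampling of a short step signal
signal = np.array([0.0, 0.0, 1.0, 1.0, 1.0, 0.0, 0.0])
half_steps = np.arange(0.0, len(signal) - 0.5, 0.5)
print(np.round(lanczos_resample(signal, half_steps), 3))
```

At integer positions the kernel is 1 at the center and 0 at every other sample, so the original samples are reproduced exactly; in between, the windowed sinc interpolates, with slight overshoot next to the step (the price of approximating an ideal low-pass filter).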

The old-school methods of anti-aliasing, like supersampling and MSAA, worked similarly. You take more samples than you need (in the case of MSAA, you do it selectively near edges), then low-pass filter them to generate a final image without aliasing. By the way, even though it seems like an intuitive choice, the averaging filter (e.g. averaging 4 4K pixels into a single 1080p pixel) is actually kind of a shitty low-pass filter, because its frequency response has large sidelobes ("ringing" in frequency space), so a lot of the high-frequency content that should be removed gets through. Lanczos is much better.
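One way to see the averaging filter's weakness is to compute its frequency response. This sketch (an illustration) looks at the 2-tap average that 4K-to-1080p downsampling applies along each axis. After halving the resolution, anything above 0.25 cycles per original sample can no longer be represented, so an ideal filter would pass nothing there.

```python
import numpy as np


def box_response(freq: float, taps: int = 2) -> float:
    """Magnitude of the frequency response of an N-tap averaging filter.

    `freq` is in cycles per (original) sample, so 0.5 is the original Nyquist limit.
    """
    n = np.arange(taps)
    h = np.full(taps, 1.0 / taps)                 # equal weights: plain averaging
    return float(abs(np.sum(h * np.exp(-2j * np.pi * freq * n))))


# After 2x downsampling per axis (4K -> 1080p), anything above 0.25 cycles/sample aliases.
for f in (0.1, 0.25, 0.3, 0.375, 0.45):
    print(f"f = {f:.3f} cycles/sample: box filter gain {box_response(f):.3f}")
```

For two taps the gain works out to |cos(pi * f)|, so frequencies just above the new limit still come through at around 60-70% strength and fold back as aliases, while an ideal (sinc-like) filter would block them entirely.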

An alternative way to do the filtering is to use a convolutional neural network (specifically, a convolutional autoencoder). DLDSR is a low-pass filter for spatial supersampling, and of course, DLSS does reconstruction. These are preferable to Lanczos because, since the signal is discrete and not band-limited, there's no perfect analytical filter for reconstruction. Instead of doing contrast-adaptive shenanigans like FSR does, you can just train a neural network to do the work. (And, by the way, if Lanczos were the ideal filter, then the neural network would learn to reproduce Lanczos, because a neural network is a universal function approximator; with enough nodes, it can approximate any continuous function.) Internally, the convolutional neural network downsamples the image several times while learning relevant features about the image, then uses the learned features to reconstruct the output image.

What's different about ray tracing, from a signal processing perspective?

(I have no professional background in rendering. I do work that involves image processing, so I know more about that. But I have done some reading about this for fun, so let's go).

When light hits a surface, some amount of it is transmitted, and some amount is scattered. To calculate the light leaving a surface, you have to solve what's called the light transport equation (also known as the rendering equation), which is essentially an integral, over all incoming directions, of the incoming light weighted by a scattering function (e.g. the BRDF) that describes how the material scatters light. But in most cases, this equation does not have an exact, analytic solution. Instead, you need to use a numerical approximation.

Monte Carlo algorithms numerically approximate an integral by randomly sampling over the integration domain. Path tracing is the application of a Monte Carlo algorithm to the light transport equation. Because you are randomly sampling, you get image noise, which shrinks as you take more random samples (the error falls off roughly as 1/sqrt(N)). But if you have a good denoising algorithm, you can reduce the number of samples needed for convergence. Unsurprisingly, convolutional autoencoders are also very good at this (because, again, universal function approximators). Again, I'm not in this field, but I mean, Nvidia's published on it before (https://research.nvidia.com/publica...n-monte-carlo-image-sequences-using-recurrent). It's out there!
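To show where the noise comes from, here is a toy Monte Carlo estimate (an illustration, not a path tracer): the integral of cos(theta) over the hemisphere, whose exact value is pi. It stands in for a light transport integral under the simplifying assumption of constant incoming light and a cosine weighting. Each estimate is random, and the spread across repeated estimates (the "noise") shrinks as the sample count grows. The seeds and trial counts are arbitrary.

```python
import numpy as np


def estimate_cosine_integral(n_samples: int, rng: np.random.Generator) -> float:
    """Monte Carlo estimate of the integral of cos(theta) over the hemisphere (exact value: pi).

    Directions are drawn uniformly on the hemisphere (pdf = 1 / (2*pi) per steradian);
    for such directions, cos(theta) is itself uniformly distributed on [0, 1].
    """
    cos_theta = rng.random(n_samples)
    pdf = 1.0 / (2.0 * np.pi)
    return float(np.mean(cos_theta / pdf))


def rms_error(n_samples: int, trials: int = 200, seed: int = 0) -> float:
    """Root-mean-square error of the estimator over repeated independent runs."""
    rng = np.random.default_rng(seed)
    errors = [estimate_cosine_integral(n_samples, rng) - np.pi for _ in range(trials)]
    return float(np.sqrt(np.mean(np.square(errors))))


for n in (16, 256, 4096):
    print(f"{n:5d} samples per estimate: RMS error {rms_error(n):.4f}")
```

Each 16x increase in samples should cut the error by roughly 4x, the 1/sqrt(N) behavior that makes brute-force convergence expensive and denoising attractive.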

And yes, you can have aliasing in ray-traced images. If you took all the ray samples from the same pixel grid, and you happened to come across any high-frequency information, it would be aliased. So instead, you can randomly distribute the Monte Carlo samples, using some sampling algorithm (https://www.pbr-book.org/3ed-2018/Monte_Carlo_Integration/Careful_Sample_Placement).

Once you have the samples, DLSS is already very similar in structure to a denoising algorithm. If, for example, the Halton sampling algorithm (https://pbr-book.org/3ed-2018/Sampling_and_Reconstruction/The_Halton_Sampler) for distributing Monte Carlo samples sounds familiar, it's because it's the algorithm that Nvidia recommends for subpixel jittering in DLSS. So temporal upscalers like DLSS already exploit well-distributed, jittered samples to reconstruct higher-frequency information. It makes sense to combine the DLSS reconstruction passes for rasterized and ray-traced samples because, in many ways, the way the data are structured and processed is very similar.
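For reference, the Halton sequence is simple to generate. This sketch builds sub-pixel jitter offsets from the base-2 and base-3 radical inverses, one common way to use Halton for temporal jitter. The 8-phase length, the choice to start at index 1, and the [-0.5, 0.5) offset convention are illustrative assumptions, not values taken from Nvidia's documentation.

```python
def radical_inverse(index: int, base: int) -> float:
    """Mirror the base-`base` digits of `index` about the radix point (van der Corput sequence)."""
    result, scale = 0.0, 1.0 / base
    while index > 0:
        index, digit = divmod(index, base)
        result += digit * scale
        scale /= base
    return result


def halton_jitter(count: int, start: int = 1) -> list[tuple[float, float]]:
    """Sub-pixel jitter offsets in [-0.5, 0.5) using the Halton(2, 3) sequence.

    Starting at index 1 skips the degenerate (0, 0) point at index 0.
    """
    return [(radical_inverse(i, 2) - 0.5, radical_inverse(i, 3) - 0.5)
            for i in range(start, start + count)]


for dx, dy in halton_jitter(8):
    print(f"({dx:+.4f}, {dy:+.4f})")
```

Successive points land in the gaps left by earlier ones, so over a few frames the jittered samples cover each pixel evenly without the clumping of purely random offsets.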

tl;dr

Aliasing is an artifact of undersampling a high-frequency signal. Good anti-aliasing methods filter out the high frequency information before sampling to remove aliasing from the signal. Temporal reconstruction methods, like DLSS and FSR, use randomly jittered samples collected over multiple frames to reconstruct high frequency image content.

Noise in ray tracing is an artifact of randomly sampling rays using a Monte Carlo algorithm. Instead of taking large numbers of random samples, denoising algorithms attempt to reconstruct the signal from a noisy input.

I’m going to be frank: I’m not sure why you repeatedly bring up this point in the thread about it not being confirmed, or whatever along those lines.

Reminder that T239 or any hardware details are not "known" or confirmed despite many posters stating as such, as correct as the leak was back then.

All I'm saying is that Nvidia cards will consume less power for similar performance (measured in FPS, not FLOPS). And it is well known that the higher-end models are less efficient given their higher clocks.

I'm not sure this is saying what you are suggesting it is saying. Not that I'm calling you out; this is tricky stuff.

@Samuspet is talking about FLOPS per Watt. How much does it cost to go past 1 TFLOP? But that's not what this article is talking about - this is talking about benchmark performance per watt.

And the article isn't benchmarking architectures, it's benchmarking GPUs. That matters, because different cards in the same architecture might have radically different efficiencies. In general, wider but slower designs are more power-efficient than narrower but faster designs. You can build cards both ways with either architecture.

Let's take a look at two cards that perform very similarly on the benchmark. The RX 6700 XT and the RTX 3070 have nearly identical benchmark results and power draw numbers. On paper, for this test, the cards look basically exactly the same.

But they have totally different designs. The RX 6700 XT is made up of 2560 RDNA 2 cores, running at 2.6 GHz. The RTX 3070 is made up of 5888 Ampere cores, running at 1.7 GHz. The RDNA 2 card is going for that traditionally inefficient narrow-but-fast design, and yet it's totally keeping up with Ampere. This actually implies the opposite: RDNA 2 is much more efficient than Ampere.

If you look at it per TFLOP, the way Samuspet was, it's even more stark. Every single RX 6000 series card absolutely stomps the RTX 30 equivalent in efficiency here.

Card | Watts | TFLOPS | TFLOPS/Watt
--- | --- | --- | ---
RTX 3070 | 219.3 | 20.31 | 0.0926128591
RTX 3060Ti | 205.5 | 16.2 | 0.07883211679
RTX 3060 (12GB) | 171.8 | 12.74 | 0.07415599534
RTX 3080 | 333 | 29.77 | 0.0893993994
RTX 3090 | 361 | 35.59 | 0.09858725762
RX 6800 | 235.4 | 32.33 | 0.1373406967
RX 6700xt | 215.5 | 26.42 | 0.1225986079
RX 6900xt | 308.5 | 46.08 | 0.1493679092
RX 6800xt | 303.4 | 41.47 | 0.1366842452

You can also see that both sets of cards have lots of variation. Details in the way those cores are arranged and clocked can have huge implications for power consumption.

If we look at frames/Watt, which is what this benchmark was designed to measure, we can see that the two architectures are roughly equivalent.

Card | Watts | Frames | Frames/W
--- | --- | --- | ---
RTX 3070 | 219.3 | 116.6 | 0.5316917465
RTX 3060Ti | 205.5 | 106.3 | 0.5172749392
RTX 3060 (12GB) | 171.8 | 83.6 | 0.4866123399
RTX 3080 | 333 | 142.1 | 0.4267267267
RTX 3090 | 361 | 152.7 | 0.4229916898
RX 6800 | 235.4 | 130.8 | 0.5556499575
RX 6700xt | 215.5 | 112 | 0.5197215777
RX 6900xt | 308.5 | 148.1 | 0.4800648298
RX 6800xt | 303.4 | 142.8 | 0.4706657877

But that raises an obvious question. If frames/Watt are similar, but TFLOPS/Watt are different, doesn't that imply that there is a difference in frames/TFLOP? Yes, it does.

Card | TFLOPS | Frames | Frames/TFLOP
--- | --- | --- | ---
RTX 3070 | 20.31 | 116.6 | 5.741014279
RTX 3060Ti | 16.2 | 106.3 | 6.561728395
RTX 3060 (12GB) | 12.74 | 83.6 | 6.562009419
RTX 3080 | 29.77 | 142.1 | 4.773261673
RTX 3090 | 35.59 | 152.7 | 4.290531048
RX 6800 | 32.33 | 130.8 | 4.045777915
RX 6700xt | 26.42 | 112 | 4.239212718
RX 6900xt | 46.08 | 148.1 | 3.213975694
RX 6800xt | 41.47 | 142.8 | 3.443453099

The Ampere cards are stomping all over the face of the RDNA 2 cards, not in the number of TFLOPS, but their quality.
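All three ratio columns come from the same three raw measurements per card, so a short script can regenerate them. This sketch takes the watts, TFLOPS and frame numbers exactly as listed in this post (it does not re-check how the listed TFLOPS were derived) and prints the cards sorted by frames per TFLOP.

```python
# (watts, TFLOPS as listed, average frames) for each card, copied from the tables in this post
CARDS = {
    "RTX 3070": (219.3, 20.31, 116.6),
    "RTX 3060Ti": (205.5, 16.2, 106.3),
    "RTX 3060 (12GB)": (171.8, 12.74, 83.6),
    "RTX 3080": (333.0, 29.77, 142.1),
    "RTX 3090": (361.0, 35.59, 152.7),
    "RX 6800": (235.4, 32.33, 130.8),
    "RX 6700xt": (215.5, 26.42, 112.0),
    "RX 6900xt": (308.5, 46.08, 148.1),
    "RX 6800xt": (303.4, 41.47, 142.8),
}


def derived_metrics(watts: float, tflops: float, frames: float) -> dict[str, float]:
    """Compute efficiency, frame efficiency, and frames per TFLOP for one card."""
    return {
        "tflops_per_watt": tflops / watts,
        "frames_per_watt": frames / watts,
        "frames_per_tflop": frames / tflops,
    }


by_frames_per_tflop = sorted(CARDS.items(), key=lambda kv: kv[1][2] / kv[1][1], reverse=True)
for name, (watts, tflops, frames) in by_frames_per_tflop:
    m = derived_metrics(watts, tflops, frames)
    print(f"{name:16} {m['tflops_per_watt']:.4f} TFLOPS/W  "
          f"{m['frames_per_watt']:.3f} frames/W  {m['frames_per_tflop']:.2f} frames/TFLOP")
```

The point of laying it out this way is that frames/W = (TFLOPS/W) x (frames/TFLOP): similar frames/W with different TFLOPS/W forces the difference into frames/TFLOP.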

What the hell does all this mean?

The first thing to take away is that there is huge variation in these devices. There isn't one Rosetta stone that allows us to definitively determine which arch is more efficient, or more powerful, by X%, all the time, every time.

The second is that the numbers are a bit deceiving. We get hung up on comparing TFLOPS to TFLOPS, but an RDNA 2 TFLOP isn't an Ampere TFLOP... and from this chart we can see a 3060 TFLOP isn't a 3090 TFLOP either!

My confirmation will be from the likes of Digital Foundry based on decided, confirmed final hardware, not from a data leak that looked to have been planned at some point in the past.

TL;DR I don’t understand the point of your comment when the only direct confirmation would come from the company selling the product to you, but that company never does that, so you’re waiting for information that will never exist.

This would make more sense if the GPU had 1280 CUDA core shaders TBH. On TSMC 4N, 1280 CUDA cores @ 586MHz is enough to hit 1.5 TFLOPS; with Drake, the portable clock would have to be 489MHz to hit 1.5 TFLOPS. Thraktor put the clock at 550MHz to 600MHz, which is 1.69 TFLOPS to 1.84 TFLOPS for Drake, and both are much more reasonable. But when we look at what Nintendo actually did with Switch's portable clocks, we see that they pushed the TX1 all the way to 460MHz on a 2015 planar (2D transistor) 20nm process node with flawed power bleeding. It wasn't the best for battery life; it was what their target performance was. The reason I believe that the DLSS test reveals the clocks is because, well... those clocks have no reason to be there, and the power consumption naming does seem to line up closely with estimations of what Ampere would draw with Drake's configuration on TSMC 4N.

Because Steam Deck is 1.6 and Steam Deck so Big. And because we talked for so long, in the 8nm days, about how much of a push 1 TFLOP would be.

For the record, I don't think 1.5 TFLOP is where we'll land. @Thraktor is right that 1.5 TFLOP is maximum efficiency, but max efficiency isn't max battery life. And 3 TFLOPS seems like the docked max before the GPU starts to get bottlenecked across the board. So I wouldn't be surprised to find something a little shy of that in both modes.

The difference between a 400MHz GPU and a 500MHz GPU is a yawn. It's not that it doesn't matter (it's a 25% increase in perf), but it's not fundamental. Especially with so many other aspects of the system seemingly in place.
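The clock and TFLOPS figures traded in this exchange all follow from one formula: peak FP32 FLOPS = CUDA cores x 2 (a fused multiply-add counts as two operations) x clock. This sketch reproduces them. The 1536-core count for Drake is an assumption here (it is the count consistent with the 489MHz to 1.5 TFLOPS figure above), and the TX1 configurations use its 256 CUDA cores with the 768MHz docked and 460.8MHz top portable clocks.

```python
def fp32_gflops(cuda_cores: int, clock_mhz: float) -> float:
    """Peak FP32 throughput in GFLOPS: each CUDA core retires one fused
    multiply-add (counted as 2 FLOPs) per clock."""
    return cuda_cores * 2 * clock_mhz / 1000.0


# Configurations from the discussion; the Drake core count (1536) is an assumption
configs = [
    ("TX1 docked (256 cores @ 768 MHz)", 256, 768.0),
    ("TX1 portable max (256 cores @ 460.8 MHz)", 256, 460.8),
    ("1280-core GPU @ 586 MHz", 1280, 586.0),
    ("Drake (1536 cores) @ 489 MHz", 1536, 489.0),
    ("Drake (1536 cores) @ 550 MHz", 1536, 550.0),
    ("Drake (1536 cores) @ 600 MHz", 1536, 600.0),
]
for label, cores, mhz in configs:
    print(f"{label}: {fp32_gflops(cores, mhz):.1f} GFLOPS")

print(f"400 -> 500 MHz: {500 / 400 - 1:.0%} more throughput at the same core count")
```

Because throughput is linear in clock at a fixed core count, the whole 400MHz versus 500MHz debate comes down to the 25% shown on the last line.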

My confirmation will be from the likes of Digital Foundry based on decided, confirmed final hardware, not from a data leak that looked to have been planned at some point in the past.

Reason I've posted this more than once is that people keep saying "we know", "we know", "we know".

You don't know anything at this moment in time.

I think there's a good chance it will be T239-based and I'll be very happy if it is (assuming no crippled aspects) but I (and everyone or almost, almost everyone here) don't know.


Heck, even if it’s treated and positioned exactly like a PS5, and it’s made clear it’s a gen-breaking next-gen console successor the way the PS5 was for the PS4/Pro… you will at least agree the “cross-gen” support for the OLED and Lite will last much longer than it does with the PS4 and PS4 Pro… right?


In this case, the bolded is wrong. While Nintendo could have worked with other vendors on possible hardware solutions, Nvidia's Tegra and Ampere lines were completely exposed in that hack: there is no other Ampere- or Ada-based SoC being worked on. The entire Ampere GPU stack only has 2 SoCs, the GA10B (Orin) and the GA10F (Drake). Orin actually fills the entire customer stack from 45+ watts down to 5 watts; there is no room for a second public Ampere Tegra solution, especially not one coming in 2024, and in 2025 they will have publicly moved on to Thor.

I think you're both right.

We know for sure T239 exists, both from the Nvidia leak and Linux commits.

We know there was an in-flux NVN2 implementation for it at some point.

In the absence of anything pointing otherwise, thinking T239 is our chip is a good assumption.

That chip represented a pretty big investment that would otherwise be lost as Nvidia hasn't announced anything that would use it.

But it's still only an assumption.

I think Nintendo is known to work on multiple hardware projects concurrently.

They very probably brought multiple solutions to the prototype stage.

Only the chip of one of those solutions leaked.

Nvidia could have worked on it by itself eyeing multiple customers, both handheld and laptops, and NVN2 was just part of the pitch to Nintendo.

We'll only know for sure around the announcement, when Eurogamer or others publish the exact specs.

I, for one, will keep assuming T239 is our guy.

Hell, I'm happy with the current Switch for the most part, and to have something significantly more powerful now too?

Nor should anyone else be.

I'm not claiming they could have cancelled T239 last year and invested in a new chip.

Dev kits for Switch 2 started going out early this year, and by summer all major third parties had Switch 2 dev kits. There just isn't time between February 2022 and early this year for another chip to be designed and produced so that the dev kits wouldn't have T239 in them, and certainly not enough time for Nintendo to feel comfortable giving all major third parties these dev kits as of July this year.

I agree.

Switch 2 is, beyond a shadow of a doubt, powered by T239, or it isn't produced by Nvidia.

I don't see a world where we drop [Series] S. In terms of parity [...] I think that's more that the community is talking about it. There are features that ship on X today that do not ship on S, even from our own games, like ray-tracing that works on X, it's not on S in certain games. So for an S customer, they spent roughly half what the X customer bought, they understand that it's not going to run the same way.

I want to make sure games are available on both, that's our job as a platform holder and we're committed to that with our partners. [...] Having an entry level price point for console, sub-$300, is a good thing for the industry. I think it's important, the Switch has been able to do that, in terms of kind of the traditional plug-into-my-television consoles. I think it's important. So we're committed.

I meant 3x the current Switch GPU's docked performance for Switch 2's handheld GPU performance.

If it can't do that 7+ years later, someone was sleeping at the wheel.

I'm not sure I understand this point. What are the other possibilities if it isn't T239, or just not Nvidia? I think this is taking devil's advocate to an extreme for no reason but to "cover all bases."

As I said, I'm assuming T239, as most of us are, and we are probably right.

But until the definitive specs are out, 5% of doubt remains.

We know for a fact that Nintendo marketed the Wii U to developers as a "360+" device; they literally set their target to be better than the 360 in GPU performance, so them doing the same with PS4 now makes a lot of sense. Targeting 1843 GFLOPS or 2 TFLOPS fits more with what Nintendo has done in the past than 1.5 TFLOPS does. On top of this reasoning, I think Nvidia just have egos; they want to release something that is better than the PS4 in raw performance. When they showed off TX1, they showed it off with the same demo PS4 used in its showcase; this is the type of thing Nvidia just cares about.

CP2077 IS a last-gen title, though.

If I may be so bold, PS4 is small fry for Nvidia. I can totally see them using a PC title that has been optimised to hell and back to use their technology as a showcase piece for Nintendo, to show how close it gets to PS5.

That title IMO would be Cyberpunk 2077. It's been getting Nvidia tech updates day and date, and I think a Drake version could be a good candidate for a Switch RTX and DLSS showcase, especially with how awful the game was on PS4. The devs seem to have a good relationship with Nintendo as well, and I can see huge sales potential for such a game as a Drake launch title.

Now, do we really think Nintendo are going to hit a 9x leap in GPU compute?

This would've had weight if Xbox hardware wasn't on the decline.

Eurogamer interviewed Phil Spencer, and his answer regarding Series S may be relevant to our hardware discussion:

The Lite model, despite its lower sales figure, plays a similar strategic role. Until Nintendo is able to release a low-cost NG Lite, it will most likely remain in the product lineup. I understand that some want Nintendo to drop support for the OG to incentivize users to move on, but personally I don’t think that’d be the company’s strategy.

- Spencer claimed that there’s no Series S parity requirement, and implied that it’s only a fan theory.

- MS is committed to supporting Series S because it’s important to have a low entry price point.

Because a low entry point is crucial (not to mention 129MM install base), Nintendo probably will maintain a steady cross-gen output for the OG models until the Lite NG comes out. As Spencer suggested, certain games may have to cut features on the OG (and run like a potato), but customers understand that they have an old console. This itself is an incentive for the users to upgrade, without outright cutting off the install base.