RTX 4060 reviews have appeared today, and they provide some useful insight into how DLSS 3's frame generation feature runs on mid-range GPUs. Unfortunately not that many reviews have actually focussed on DLSS FG, but Ars Technica's review compares DLSS off, DLSS without frame generation and DLSS with frame generation across 4 games, all at 1440p resolution, which gives us something to work with.

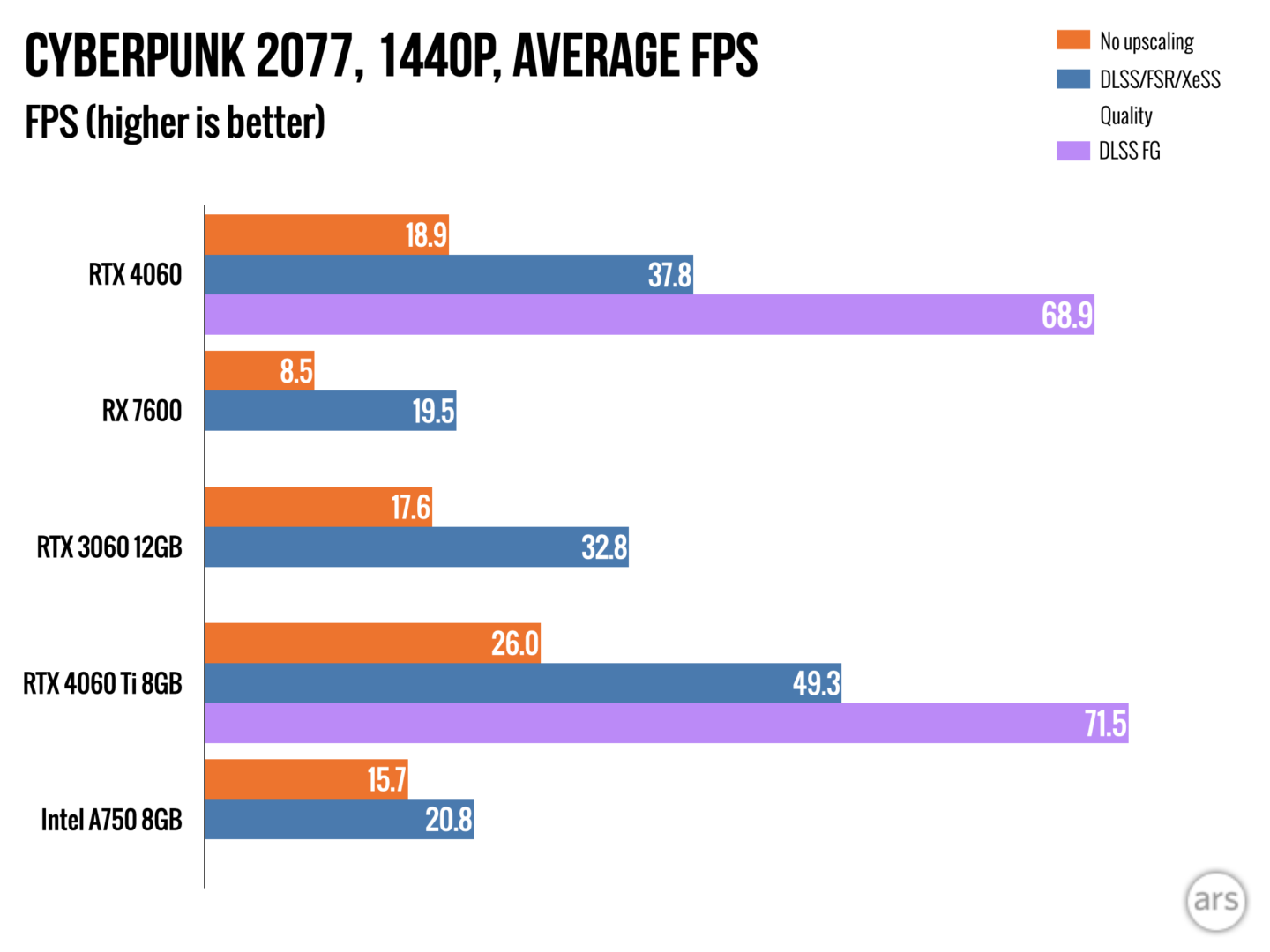

One interesting thing about the numbers is that the performance with frame generation enabled doesn't increase much as the baseline performance of the game increases. Here's Cyberpunk 2077, the best case scenario for DLSS FG:

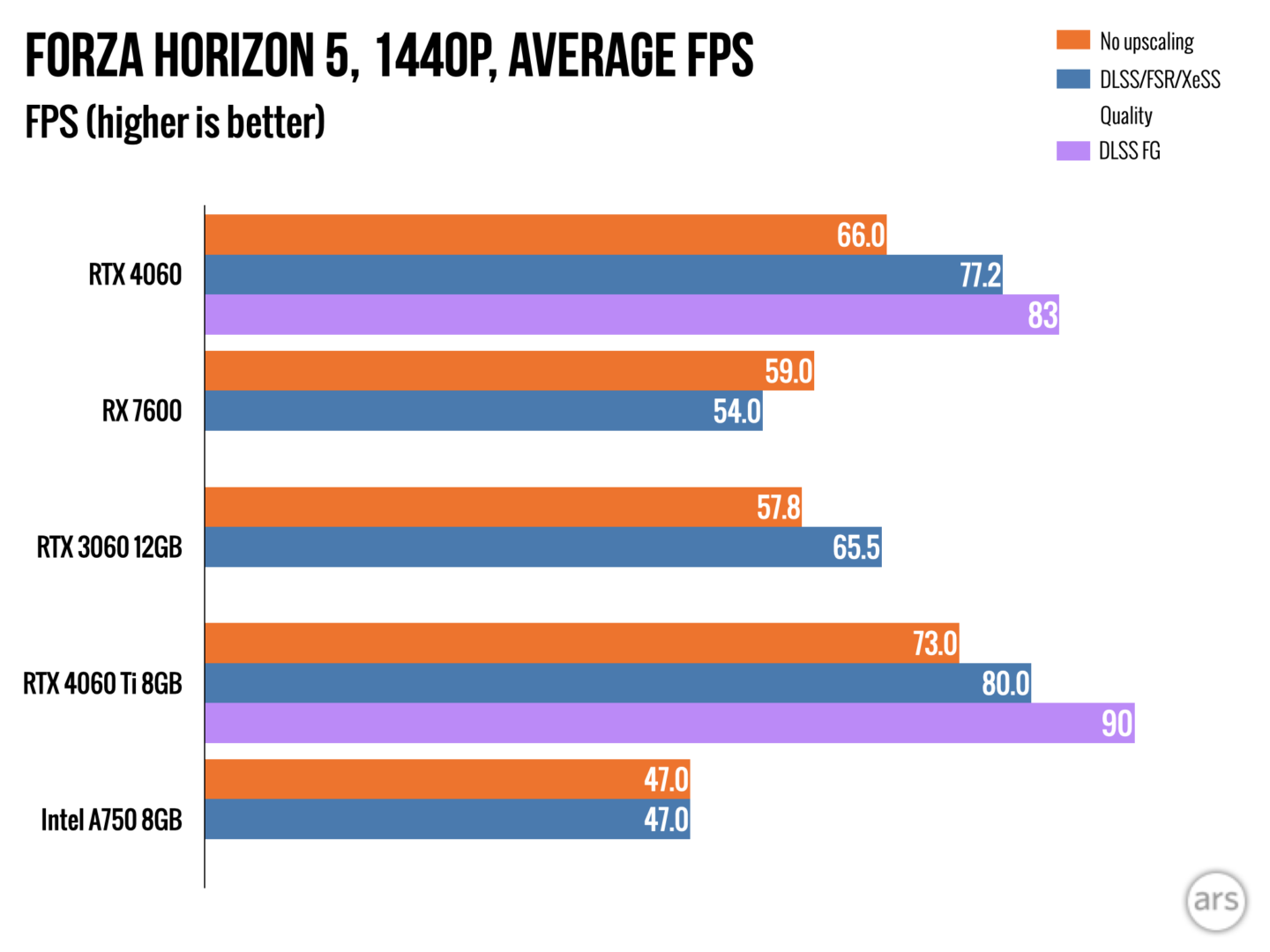

The game gets almost a 2x speedup from frame generation over standard DLSS, along the lines of what Nvidia advertised. Here's Forza Horizon 5:

Despite running at a much higher base frame rate, the benefits of turning on frame generation are almost non-existent. The performance with DLSS FG seems to plateau around 80-90 FPS, with the highest frame rate of 92 FPS on Hitman 3.

The key thing here is that DLSS 3's frame generation isn't free. If it could instantaneously generate a new frame every time, then the frame rate with frame generation would always be double the frame rate without. The higher the cost of generating a frame (i.e. the more time it takes to generate), the lower the benefit of DLSS FG, up to the point where generating a frame takes longer than rendering one, at which point it would actually harm performance. We're obviously not getting a uniform doubling of the frame rate, and in some cases not even a 10% boost in performance, which gives us a hint about the cost of frame generation.
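To put rough numbers on that trade-off, here's a minimal sketch, assuming the simplest possible model: every rendered frame is followed by one generated frame, and the two are produced one after the other rather than overlapping. The function name and the `gen_ms` cost are made up for illustration; none of the values are measurements.

```python
def fps_with_frame_generation(base_fps: float, gen_ms: float) -> float:
    """Output FPS when each rendered frame is followed by one generated frame.

    Assumption (not confirmed by Nvidia or the reviews): rendering and
    generation run sequentially, so one rendered + one generated frame
    takes render_ms + gen_ms in total.
    """
    render_ms = 1000.0 / base_fps
    # Two frames reach the display for every (render_ms + gen_ms) of work.
    return 2 * 1000.0 / (render_ms + gen_ms)


if __name__ == "__main__":
    # Hypothetical generation costs, in milliseconds per generated frame.
    for gen_ms in (0.0, 5.0, 10.0, 20.0, 30.0):
        for base_fps in (30.0, 50.0, 80.0):
            out = fps_with_frame_generation(base_fps, gen_ms)
            print(f"gen={gen_ms:4.1f} ms  base={base_fps:5.1f} FPS  with FG={out:6.1f} FPS")
```

In this model a zero-cost generator exactly doubles the frame rate, a generator that takes as long as rendering a frame gives no gain at all, and anything slower than that drops the output below the base frame rate. It also shows why the gain shrinks as the base frame rate rises: the fixed generation cost becomes a larger share of each frame pair.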

There are obviously quite a few potential bottlenecks which could be impacting the performance here, the key ones being tensor core performance, memory bandwidth and the optical flow accelerator (OFA). I don't think the OFA is the bottleneck in this case, as it appears to be identical across the Ada lineup and the RTX 4090 can achieve much higher frame rates with FG. The bandwidth could well be a significant bottleneck, as there's a lot of data being accessed, but taking the cache into account it would be hard to quantify. That leaves tensor core performance, which is easy enough to quantify, and likely a bottleneck in at least some cases.

Let's say, for simplicity, that DLSS 3's FG algorithm is purely bottlenecked by tensor core performance. At 1440p on an RTX 4060, there seems to be enough tensor core performance to produce around 46 generated frames a second for an output of 92 FPS. Maybe it could squeeze a bit more out in certain circumstances, so let's just say 50 generated frames a second for 100 FPS. I'm not quite sure whether they're generated concurrently with the regular frames or sequentially, but it doesn't really matter that much, as the same would be true on any other hardware.

The RTX 4060's GPU is twice the size of T239's, and runs at around 2.5x the clock speed I'd expect from T239 in docked mode, so it has around 5x the overall tensor core performance. If the RTX 4060 is limited by tensor core performance to 100 FPS using frame generation at 1440p, then on hardware with 1/5th the performance it would be limited to around 20 FPS. Outputting at 4K, we might get as little as 10 FPS.
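The arithmetic above can be written out as a quick sketch. The 100 FPS cap, the 2x size ratio and the 2.5x clock ratio are the figures from this post; the function names are hypothetical, and the assumption that the cost of generating a frame scales linearly with the number of output pixels is mine, added only to get a 4K figure.

```python
def pixels(width: int, height: int) -> int:
    """Number of pixels in one output frame."""
    return width * height


def scaled_fg_cap(reference_cap_fps: float,
                  relative_tensor_perf: float,
                  pixel_ratio: float = 1.0) -> float:
    """Scale a frame generation FPS cap to different hardware and resolution.

    Assumptions: FG is purely tensor-core bound, and its cost grows
    linearly with the number of output pixels.
    """
    return reference_cap_fps * relative_tensor_perf / pixel_ratio


RTX_4060_CAP_1440P = 100.0  # rounded-up cap estimated from the 92 FPS result
SIZE_RATIO = 2.0            # RTX 4060 GPU size relative to T239
CLOCK_RATIO = 2.5           # RTX 4060 clock relative to a guessed docked clock

t239_relative_perf = 1.0 / (SIZE_RATIO * CLOCK_RATIO)  # about 1/5th

cap_1440p = scaled_fg_cap(RTX_4060_CAP_1440P, t239_relative_perf)

ratio_4k = pixels(3840, 2160) / pixels(2560, 1440)
cap_4k = scaled_fg_cap(RTX_4060_CAP_1440P, t239_relative_perf, ratio_4k)

print(f"T239 FG cap at 1440p: {cap_1440p:.1f} FPS")
print(f"T239 FG cap at 4K:    {cap_4k:.1f} FPS")
```

With strict pixel-count scaling, 4K has 2.25x the pixels of 1440p, so the 20 FPS cap drops to a little under 9 FPS, which is in the same ballpark as the "as little as 10 FPS" figure.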

Obviously this is an over-simplified extrapolation based on limited data, but I'd be surprised if it was that far from the truth. I've seen a lot of discussion over whether T239 could have enough OFA performance for DLSS frame generation, but honestly that was never going to be the bottleneck, and the overall level of performance (and memory bandwidth) required to generate a frame quickly enough is a much bigger issue. In fact, from what I can tell even the RTX 4060 might struggle with hitting 60 FPS at 4K using frame generation. Unfortunately I can't find any reviews which compare 4K performance with frame generation to DLSS without frame generation, and the reviews which do test frame generation at 4K (see here and here) show results which are pretty much what you'd expect from regular DLSS 2. I wouldn't be surprised if the Nvidia drivers turn frame generation off at higher frame rates when they detect that it wouldn't improve performance.