
Hey everyone, staff have documented a list of banned content and subject matter that we feel are not consistent with site values, and don't make sense to host discussion of on Famiboards. This list (and the relevant reasoning per item) is viewable here.


StarTopic Future Nintendo Hardware & Technology Speculation & Discussion |ST| (Read the staff posts before commenting!)

- Thread starter Dakhil

- Start date

Threadmarks

View all 18 threadmarks


Recent threadmarks

- Poll #3: When do you think is the launch window for Nintendo's new hardware?
- Announcement regarding links to news and rumours from 2022 and 2021
- Rough summary of the 2 August 2023 episode of Nate the Hate
- Rough summary of the 11 September 2023 episode of Nate the Hate
- Anatole's deep dive into a convolutional autoencoder
- Differences between T234 and T239
- Rough summary of the 19 October 2023 episode of Nate the Hate
- necrolipe's Twitter (X) post on why a 2025 launch doesn't automatically make T239 outdated based on one of the leaked slides from Microsoft

D

Deleted member 2

Guest

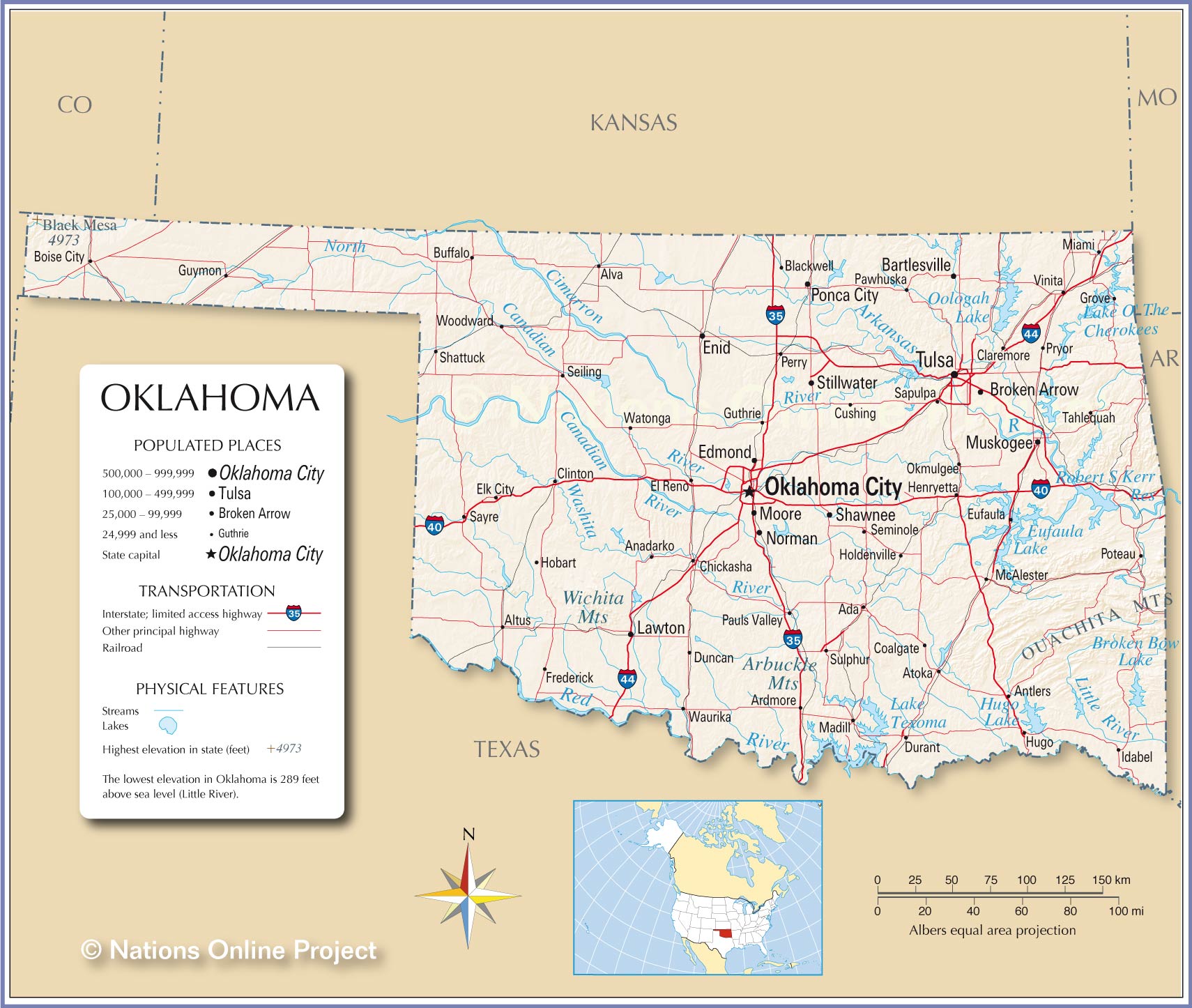

Looks like Oklahoma to me

ItWasMeantToBe19

Manakete

The only game console originally released in the middle of the year (April to August) in the last 34 years is the N64 due to Mario 64 delays.

It's possible the Switch 2 breaks this trend, but I would expect September 2024.


darthdiablo

+5 Death Stare

- Pronouns

- he/him

> Yeah… no. Eurogamer have leaked the exact specs of Xbox One, PS4, PS4 Pro, Xbox One X, Switch, Xbox One X and PS5 all way ahead of their official releases.

Here's the Eurogamer article on NX, before official Nintendo reveal.

I don't think exact specs were posted anywhere in that article.

Goodtwin

Like Like

Unless Nintendo lied to investors, this thing isn't coming out before April 1, 2024.

Nintendo said that no new hardware was part of the 15 million forecast for the fiscal year. They never said that new hardware wouldn't come out in the fiscal year, only that it wasn't a factor for the 15 million forecasted Switch sales. This has been misreported over and over again, but it doesn't change what was actually said.

qwerp

Moblin

> User fwd-bwd has a post where they collected reports, rumors and what not for when it could possibly release, maybe you find that handy, there's been a few rumors about H1. I'm too lazy to look for it though.
>
> Which, btw. is also my point of view, i'm not saying it's definitely releasing March 2024, i'm saying H1. I just said that outside of launch or Switch January 2017 like showcase, there's nothing else that would make sense in March 2024.

A lot of those reports are just speculation. The only decent one was MoneyDJ, which was months ago.

Concernt

Optimism is non-negotiable

- Pronouns

- She/Her

> Yeah… no. Eurogamer have leaked the exact specs of Xbox One, PS4, PS4 Pro, Xbox One X, Switch, Xbox One X and PS5 all way ahead of their official releases.

And yet they still got the OLED Model leaks wrong, refuse to comment on Tegra T239, etc.

Leaks are the exception. Not the rule.

Plus. We DO have leaked clocks already, so, irrelevant. Even if they are in-dev clocks, so was Eurogamer's reporting- ah, and that makes much of their reporting "not exact", because they aren't and weren't, and that's OK.

You know what DOES have some precise specs for T239?

This thread!

qwerp

Moblin

> Here's the Eurogamer article on NX, before official Nintendo reveal.
>
> I don't think exact specs were posted anywhere in that article.

The digital foundry video linked in the article talks about specs.

qwerp

Moblin

> And yet they still got the OLED Model leaks wrong, refuse to comment on Tegra T239, etc.
>
> Leaks are the exception. Not the rule.
>
> Plus. We DO have leaked clocks already, so, irrelevant. Even if they are in-dev clocks, so was Eurogamer's reporting- ah, and that makes much of their reporting "not exact", because they aren't and weren't, and that's OK.
>
> You know what DOES have some precise specs for T239?
>
> This thread!

When did eurogamer report on the OLED model?

Again, so little noise for such a close release. (I very much WANT a closer release)

darthdiablo

+5 Death Stare

- Pronouns

- he/him

> The digital foundry video linked in the article talks about specs.

Wasn't that video talking about Tegra X1, a non-custom chip that you can get off the shelf? I don't think any other specs were talked about. In fact, DF wasn't even sure which Tegra X1 variant would be used for Switch.

Edit: It was a fun trip down the memory lane though. Switch was cutting edge handheld for its time in 2017.

- Pronouns

- He/Him

Dev kits? Now that's the least likely thing, as there seem to be quite a lot already in dev hands.

It's either a Switch January 2017 presentation for ReDraketed, or launch. Anything else isn't really info devs, especially indie devs that were around at the Gamescom demo, would need.

Also, everyone talks about there would be a lot of leaks. We just had two, and we had a leak for a lot of the technical details in the nVidia leak.

It’s definitely not dev kits, but I’m not confident it will be release either. My guess is a reveal or a developer deadline for launch window games and then a reveal shortly after. In either case, we’ll probably see the release itself not so long after and ahead of the other big two releasing their updated consoles.

We’re in the end game now. In my mind it’s just a question of where between the 6 and 12 months we are. Either way, with how long this discussion has been ongoing, the goal line finally feels in sight.

Shoulder

Koopa

I’m on page 1607, so I’m quite behind on much of this talk, but wanted to chime in briefly concerning the Apple news with iPhone 15 playing AAA games.

Yes, the phone can play games. It can answer phone calls, do texting, install apps of limitless potential, etc. It's a modern computer in the sense of the word for better or worse.

In a similar vein, a 1/2 Ton Crew Cab pickup Truck can haul all your goods, tow almost whatever you throw at it these days, can carry your entire family, has all the creature comforts and amenities you expect, they ride relatively well for their size, etc. It’s the modern Automobile in the sense of the word for better or worse. But like a smartphone, most people don’t really use trucks to haul heavy loads, or tow big things. The iPhone 15 may be able to play games, but I believe most people don’t really use smartphones to play AAA games. They use it for other purposes.

The marketing suggests an iPhone, and a Truck can do all these things, and has all these features. But will folks actually use them? Not really.

Could be wrong, but I don’t see Apple coming for Nintendo's market share in the gaming market. And even if it does, then I think the entire gaming industry ought to take notice as well because it won't stop there for handhelds. It'll bleed its way onto the TV console spectrum.

D

Deleted member 3433

Guest

Heh, I remember seeing the first footage of Dragon Quest XI in 2015, thinking, there's no way that the game will be possible on Nintendo's new hardware, the NX, with a PlayStation 4 release on the horizon. The Switch really surpassed many of my expectations.

D

Deleted member 5146

Guest

> The only game console originally released in the middle of the year (April to August) in the last 34 years is the N64 due to Mario 64 delays.
>
> It's possible the Switch 2 breaks this trend, but I would expect September 2024.

Not entirely true. Several consoles have been released independently of the 'big three' in the April-to-August period, and Nintendo specifically released the Game Boy in April of 1989 in Japan and in July in the Americas, the Virtual Boy in summer of 1995, the Game Boy Advance in Europe and the Americas in June of 2001, and the DSi outside of Asia in April of 2009.

Even then, Nintendo hardware, handhelds especially, has often launched in Q1: the 3DS in February (Japan) and March (Europe/Americas), the DS Lite in March, and the Switch worldwide in March. Switch 2 would be far more at home in March than September.

Thraktor

"[✄]. [✄]. [✄]. [✄]." -Microsoft

- Pronouns

- He/Him

GRAAAAAA NEW DOCTRE DROPPED

We already knew about the job listing tho : P

I don't think this is related to DLSS. We know from the Nvidia hack that DLSS was already integrated via the NVN2 API on their side, and Nvidia's existing DLSS implementation is likely already extremely well optimised for the Ampere architecture. There's not a whole lot for Nintendo to do there other than test and provide feedback.

And no, before anyone asks, it's got nothing to do with ChatGPT either. Models like ChatGPT are Large Language Models (LLMs), and are far too big to run on a portable device like Switch 2, even if they were of any use in a videogame, which is debatable. Machine learning is a lot more than just DLSS and ChatGPT.

Nintendo are about to release the first gaming system with dedicated machine learning acceleration hardware. This means that developers, both inside and outside Nintendo, are going to have access to functionality they haven't had before, and are going to need assistance to effectively use it. Game developers are good at optimising for hardware with limited performance and memory, but most don't have any expertise in machine learning. Meanwhile, ML experts know how to design and train models, but aren't typically experts in optimising for hardware like games consoles. That's where the people applying for the job below would come in.

Here's the job description:

We at Nintendo are looking for a Data Engineer to help with integration of machine learning technologies on low-power embedded platforms. You will be working at the intersection of machine learning inference engines and embedded systems, facing challenges that stem from processing and memory constraints and a power budget. Tasks include, but are not limited to, porting of machine learning frameworks to embedded platforms, evaluation and benchmarking of machine learning hardware solutions, selection and optimization of machine learning models to fit power, memory, and CPU budgets.

DESCRIPTION OF DUTIES:

- Design, develop and maintain a formal framework for validating and benchmarking machine learning solutions.

- Perform evaluations of machine learning hardware.

- Research, evaluate, analyze and optimize machine learning models.

Let's say you're a game developer, and you've got a colleague with a background in machine learning, and together you think that you've come up with a neat ML-based solution for, say, character animation, and you want to run it on Switch 2. Your first problem will be just physically getting it to run on the hardware at all. You're most likely creating and training the model using a framework like PyTorch, using Python. You wouldn't want to actually run Python on a games console, and besides, PyTorch doesn't exactly list "Nintendo's unreleased game console" as compatible hardware.

So, first you need a way to take a model that's been created and trained in PyTorch and run it on the Switch hardware. This means compiling the model for the Ampere GPU, and it's not entirely different than writing code in a language like C++ and compiling it for an x86 or ARM CPU. Except, unlike compiling C++ code, where you can easily find and use any number of compilers (although Nintendo likely provides an official one), there aren't exactly a range of easy to use high-performance ML compilers around. In fact, part of Nvidia's dominance in the AI space is a result (and/or cause) of this problem, as many AI implementations are dependent on Nvidia's libraries like cuDNN which are pre-compiled for Nvidia's GPUs.

There has been some movement in the last couple of years to open-source, hardware-agnostic machine learning frameworks. OpenAI's Triton is the one which seems to be gaining the most traction, and it includes a language for defining the execution of ML models, an intermediate representation (based on LLVM-IR, for those curious) and a compiler. PyTorch now utilises Triton as a backend for their compiler module when used on GPUs. Unfortunately, these still don't fully solve the problem for a device like the Switch, as they're built around JIT (just-in-time) compilation, where code is compiled on the device immediately before it runs. This isn't a problem for big models, where compilation is far quicker than the execution of the model itself, but on a games console you want to save every bit of frame-time you can get, so ahead-of-time compilation is a must.
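To make the JIT versus ahead-of-time point a bit more concrete, here is a toy sketch in plain Python. It has nothing to do with Triton or NVN2: Python's built-in `compile()` just stands in for an ML compiler, and `MODEL_SOURCE`, `run_jit` and `run_aot` are made-up names for illustration. Real JIT compilers also cache their output, so the cost is usually paid on first use rather than on every call as it is here.

```python
# Toy illustration only: Python's built-in compile() stands in for an ML compiler.
# MODEL_SOURCE, run_jit and run_aot are hypothetical names, not part of any SDK.

MODEL_SOURCE = "0.5 * x * x + 2.0 * x + 1.0"  # pretend this is a trained model


def run_jit(x):
    """Compile the 'model' immediately before running it, on every call."""
    code = compile(MODEL_SOURCE, "<model>", "eval")
    return eval(code, {"x": x})


# Ahead-of-time: pay the compilation cost once, e.g. when the game is built.
AOT_CODE = compile(MODEL_SOURCE, "<model>", "eval")


def run_aot(x):
    """Reuse the precompiled code object, so no compile step sits in the frame loop."""
    return eval(AOT_CODE, {"x": x})


if __name__ == "__main__":
    import timeit

    jit_time = timeit.timeit(lambda: run_jit(3.0), number=10_000)
    aot_time = timeit.timeit(lambda: run_aot(3.0), number=10_000)
    print(f"compile on every call:  {jit_time:.4f}s")
    print(f"compiled ahead of time: {aot_time:.4f}s")
```

Both functions return the same value; the only difference is where the compilation work happens, which is the part that matters when you are counting frame-time.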

Nintendo needs a solution to this, both for their own developers, and to provide to third parties. When the job description says "porting of machine learning frameworks to embedded platforms", this is the kind of thing they're talking about; taking a popular machine learning framework like PyTorch and providing a way to take models from it and compile them for the Switch 2's GPU. I wouldn't be surprised if they're using open-source software like Triton for this and updating it for their own purposes, like pre-compilation and optimisation for Switch 2's hardware.

Even once you've got your model compiled and running on the hardware, that's only the first step. You then need to get it running well. Creating ML models is an iterative process, which generally involves repeatedly tweaking and re-training the model until you get the accuracy or performance you need, or just can't squeeze any more out of it. For an embedded system like a games console, performance is vital, and at each step you need to test performance on the embedded hardware itself (ie your Switch 2 dev kit), not just the GPU on the workstation or server you're using. This means you need a way to actually measure and profile the performance of your ML code on the hardware, or as the job description puts it a "framework for validating and benchmarking machine learning solutions".
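As a rough idea of what the smallest possible "benchmarking framework" might look like, here is a minimal sketch in plain Python. Everything in it is an assumption for illustration: `benchmark` and `toy_model` are hypothetical names, the 2 ms budget is arbitrary, and a real tool would measure GPU time on the dev kit rather than wall-clock time on a workstation CPU.

```python
import statistics
import time


def benchmark(fn, sample_input, budget_ms, warmup=10, runs=200):
    """Time fn(sample_input) repeatedly and compare it with a frame-time budget."""
    for _ in range(warmup):  # let caches settle before measuring
        fn(sample_input)

    timings_ms = []
    for _ in range(runs):
        start = time.perf_counter()
        fn(sample_input)
        timings_ms.append((time.perf_counter() - start) * 1000.0)

    timings_ms.sort()
    # Use a high percentile: one slow inference is a dropped frame.
    p99 = timings_ms[min(len(timings_ms) - 1, int(0.99 * len(timings_ms)))]
    return {
        "median_ms": statistics.median(timings_ms),
        "p99_ms": p99,
        "within_budget": p99 <= budget_ms,
    }


def toy_model(values):
    """Hypothetical stand-in for a compiled model: a single dot product."""
    weights = [0.1] * len(values)
    return sum(w * v for w, v in zip(weights, values))


if __name__ == "__main__":
    # 2 ms is an arbitrary example slice of a roughly 16.7 ms (60 fps) frame.
    print(benchmark(toy_model, [1.0] * 256, budget_ms=2.0))
```

The point of reporting a tail percentile alongside the median is that a model which is fast on average but occasionally spikes is still a problem in a game.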

TLDR: The Switch 2 has ML accelerators (tensor cores). For developers to actually use them effectively, Nintendo needs people like the applicants for this job to make sure their ML models both run and perform well on the hardware.

As a separate note, as DLSS and ChatGPT seem to be almost the only things brought up regarding uses for machine learning, I feel I should point people to some of the research out there on the many other potential uses for ML in video games. Both Ubisoft (here) and EA (here) have done quite a bit of research and published several papers in the area. Some promising avenues include animation (here and here), physics (here) and pathfinding (here). These are just a few examples from a single team of researchers, there's a lot more out there. There have also been several games which have already shipped with some use of ML, even without dedicated hardware, for instance cloth physics in Madden 21 and texture upscaling in God of War Ragnarok. (The GoW presentation also illustrates how difficult it is to optimise an ML model for a platform when you don't have a good compiler).

In general, anything that can be computed can be implemented via machine learning. In many (or most) cases the existing approach is going to be superior in terms of performance and/or quality, but ML is often pretty well suited as a "good enough" solution, where it's not strictly as accurate as an existing method, but can run quicker and produces results which are close enough that people generally can't tell the difference. Video games are built on "good enough" solutions, where any time you talk about a game being well optimised or looking good for its hardware, it's because the developers have come up with the cheapest possible way to do things that still looks "good enough" to the player. I don't expect ML to completely overtake traditional techniques in gaming, but I do think there are going to be a lot of little places where a fast ML implementation can be "good enough" and be a better choice than existing non-ML techniques. As Switch 2 will be the first hardware with dedicated acceleration for these tasks, it will be at the forefront of developers implementing these new techniques.
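A deliberately tiny sketch of the "good enough" idea, in plain Python: fit a cheap model to the output of an exact function and check how far off it is. A two-parameter least-squares fit stands in for a neural network here, and `fit_cubic_sine` and `cheap_sine` are made-up names, so treat it as an illustration of the trade-off rather than of any technique a real game uses.

```python
import math


def fit_cubic_sine(samples=200):
    """Least-squares fit of a*x + b*x**3 to sin(x) on [-pi/2, pi/2]."""
    xs = [-math.pi / 2 + math.pi * i / (samples - 1) for i in range(samples)]
    # Normal equations for the two features x and x**3.
    s11 = sum(x ** 2 for x in xs)
    s13 = sum(x ** 4 for x in xs)
    s33 = sum(x ** 6 for x in xs)
    t1 = sum(x * math.sin(x) for x in xs)
    t3 = sum(x ** 3 * math.sin(x) for x in xs)
    det = s11 * s33 - s13 * s13
    a = (t1 * s33 - t3 * s13) / det
    b = (s11 * t3 - s13 * t1) / det
    return a, b


def cheap_sine(x, a, b):
    """The 'good enough' replacement: a few multiplies and one add."""
    return a * x + b * x ** 3


if __name__ == "__main__":
    a, b = fit_cubic_sine()
    worst = max(
        abs(cheap_sine(x / 100, a, b) - math.sin(x / 100)) for x in range(-157, 158)
    )
    print(f"a={a:.4f}, b={b:.4f}, worst error={worst:.4f}")
```

The fitted version is never exactly right, but the error is small and bounded, and whether that is acceptable depends entirely on what the player can actually notice.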


D

Deleted member 2

Guest

nate the hate answering a patreon question, c. 2023 (colourised)

Interesting that a photo taken in 2023 was taken in black & white

D

Deleted member 2

Guest

> knock knock
>
> who’s there?
>
> leaks
>
> leaks who?
>
> all the leaks we’ve got over the last couple of weeks, calm it will ya, there’s another big gaming convention starting Thursday.

i don't get it

theguy

Chain Chomp

> i don't get it

same tbh

Raccoon

Fox Brigade

- Pronouns

- He/Him

> Interesting that a photo taken in 2023 was taken in black & white

actually the colourisation was of the green screen in the back. sorry I should have been more clear

darthdiablo

+5 Death Stare

- Pronouns

- he/him

> i don't get it

TGS. But I've tempered my expectations of hearing about any leaks from that one, Japanese devs are notoriously more tight-lipped. If we do get something that'd be a pleasant surprise.

D

Deleted member 2

Guest

> actually the colourisation was of the green screen in the back. sorry I should have been more clear

ah yes of course, thank you

D

Deleted member 2

Guest

> TGS. But I've tempered my expectations of hearing about any leaks from that one, Japanese devs are notoriously more tight-lipped. If we do get something that'd be a pleasant surprise.

i meant i don't get the knock knock joke

Raccoon

Fox Brigade

- Pronouns

- He/Him

> ah yes of course, thank you

always happy to help

Goodtwin

Like Like

The big info dump for Switch happened in July of 2016. That is when Eurogamer/Digital Foundry reported on it being a portable console with detachable controllers that uses a Tegra SOC. It was a few months later when they became confident it was a standard Tegra X1 SOC. It was basically eight months prior to release when the details started to flow. Since it's basically a lock that this will be a more straightforward successor, there are fewer noteworthy details to leak. There is no point in "leaking" details about Nintendo's new hardware having detachable controllers and being a portable console that can dock; that isn't newsworthy for obvious reasons.

TheSentry

Tektite

Is there anything to be concerned over in his harping on about the 'long game' and boasting of using an affiliated entity to try buying up Nintendo shares to increase influence and pressure a sale? Hopefully Nintendo respond accordingly and retaliate appropriately to this whatever way they can, whether its by cutting Microsoft out of their future business partnerships or ousting the conspiring shareholders and placing a ban on stock sales to any entity with a Microsoft connection

Between the success of their IP being used outside of video games like the theme park and Mario movie, plus their stake in Pokémon (one of if not the most popular IP in the world), plus the success of the Switch…. I won’t be surprised if some companies try to make aggressive moves to purchase Nintendo: the Saudis, who already bought significant stock in Nintendo; Microsoft, who has openly talked about acquiring them; or some powerful Chinese company like Tencent.

Thankfully, Japan apparently has some laws or strict red tape that is in the way of non-Japanese companies acquiring Japanese companies. I’m sure Japan would see it in their best interest to keep Nintendo domestically owned. Nintendo is like their Disney and a big part of their cultural and economic identity. So much so they highlighted Nintendo/Mario in them receiving the 2020 (2021) Olympics!

But it’s a real issue going forward that Nintendo will have to be conscious of. I personally don’t get the impression they ever want to be purchased or acquired. So it would have to be a hostile takeover, much like Ubisoft had to endure years ago.

Lancelot

Like Like

> Ratchet and Clank, Astro's Playroom, Sackboy's adventure, Spyro Reignited etc. say Hi.

Spyro isn't even their IP anymore. Neither of those games were hits compared to their actual flagship titles though, it is fair to say it's never been their focus in the last ten years (and it's better that way imo).

D

Deleted member 5146

Guest

> The big info dump for Switch happened in July of 2016. That is when Eurogamer/Digital Foundry reported on it being a portable console with detachable controllers that uses a Tegra SOC. It was a few months later when they became confident it was a standard Tegra X1 SOC. It was basically eight months prior to release when the details started to flow. Since it's basically a lock that this will be a more straightforward successor, there are fewer noteworthy details to leak. There is no point in "leaking" details about Nintendo's new hardware having detachable controllers and being a portable console that can dock; that isn't newsworthy for obvious reasons.

Never got to live the anticipation or speculation cycle of the Switch ahead of its release, how did reception to the detachable controllers thing go down?

Armoured_Bear

Like Like

- Pronouns

- he

Yeah… no. Eurogamer have leaked the exact specs of Xbox One, PS4, PS4 Pro, Xbox One X, Switch, Xbox One X and PS5 all way ahead of their official releases.

Nintendo Switch CPU and GPU clock speeds revealed

Spec reveals are never easy. Months - sometimes years - of anticipation build after initial teasers. Rumours circulate,…

3 months before release.

- Pronouns

- He/Him

> Yeah… no. Eurogamer have leaked the exact specs of Xbox One, PS4, PS4 Pro, Xbox One X, Switch, Xbox One X and PS5 all way ahead of their official releases.

Eurogamer leaked that Switch was using TX1 in July of 2016, 3 months before Switch was announced. Nothing about the clocks was known until December 2016, after the Switch was announced.

Eurogamer/Digital Foundry have said for about a year now that the Switch 2 will be using T239, which was leaked in the Lapsus hack in February 2022. We have no information on clocks but we know everything else about the chip, well over 3 months before it launched.

I'm not sure what else people are expecting to leak. We have leaks of a 1080p 8 inch LCD screen, and possibly a camera. What else do you think there is to leak? We know virtually everything outside of launch timing, price and clock speeds.

Lugia667

Darknut

- Pronouns

- He/Him

> Never got to live the anticipation or speculation cycle of the Switch ahead of its release, how did reception to the detachable controllers thing go down?

you missed this

/cdn.vox-cdn.com/uploads/chorus_image/image/49163473/nintendo_nx_fake.0.0.jpg)

- Pronouns

- He/Him

> you missed this
>
> /cdn.vox-cdn.com/uploads/chorus_image/image/49163473/nintendo_nx_fake.0.0.jpg)

Ah yes, the trees.

darthdiablo

+5 Death Stare

- Pronouns

- he/him

> I'm not sure what else people are expecting to leak. We have leaks of a 1080p 8 inch LCD screen, and possibly a camera. What else do you think there is to leak? We know virtually everything outside of launch timing, price and clock speeds.

Less "expecting", more "hoping for" a leak that tells us which node process T239 SOC was made on.

D

Deleted member 5146

Guest

> you missed this
>
> /cdn.vox-cdn.com/uploads/chorus_image/image/49163473/nintendo_nx_fake.0.0.jpg)

Whatever that is, I like it

qwerp

Moblin

> The big info dump for Switch happened in July of 2016. That is when Eurogamer/Digital Foundry reported on it being a portable console with detachable controllers that uses a Tegra SOC. It was a few months later when they became confident it was a standard Tegra X1 SOC. It was basically eight months prior to release when the details started to flow. Since it's basically a lock that this will be a more straightforward successor, there are fewer noteworthy details to leak. There is no point in "leaking" details about Nintendo's new hardware having detachable controllers and being a portable console that can dock; that isn't newsworthy for obvious reasons.

The differences from the Switch 1 would be newsworthy. Simply reporting that it is releasing in March is newsworthy.

- Pronouns

- He/Him

> Less "expecting", more "hoping for" a leak that tells us which node process T239 SOC was made on.

Technically there's a leak for that too, if you are open to believe kopite and don't think the person has old / outdated / mixed up info.

- Pronouns

- He/Him

> Less "expecting", more "hoping for" a leak that tells us which node process T239 SOC was made on.

Nobody leaked the node the Switch was using, it was thought to be 16nm for a while. It was only determined to be 20nm after a teardown.

I expect the same thing will happen here, we probably won't know for sure until a die shot.

> The differences from the Switch 1 would be news worthy. Simply reporting it is releasing in March is news worthy.

Good news, we have the reports of a 1080p 8inch screen and possibly a camera. And reports of March being noteworthy in some way, as well as plenty of expectations for launch in H1 2024.

qwerp

Moblin

> Good news, we have the reports of a 1080p 8inch screen and possibly a camera. And reports of March being noteworthy in some way, as well as plenty of expectations for launch in H1 2024.

Plenty is putting it nicely.

Nate is the only one who specifically said March. Even then, he did not know the context.

Armoured_Bear

Like Like

- Pronouns

- he

> Spyro isn't even their IP anymore. Neither of those games were hits compared to their actual flagship titles though, it is fair to say it's never been their focus in the last ten years (and it's better that way imo).

OK, Kena: Bridge of Spirits, Concrete Genie aren't bloody death simulators, are they.

We should get away from the Nintendo = only cutesy, the other 2 = only violent realistic games.

darthdiablo

+5 Death Stare

- Pronouns

- he/him

> Technically there's a leak for that too, if you are open to believe kopite and don't think the person has old / outdated / mixed up info.

Considering kopite got multiple details wrong about T239 (cores and codename), I've already heavily discounted anything coming from him. He also got details about other non-T239 chip wrong as well.

We'll see whether or not kopite ends up being right. Personally TSMC 4N makes much more sense, but if it's SEC8N, I guess it's a miracle they somehow got it working, if it's still 12 SM GPU, which we are pretty sure about based on NVN2 leak.

theguy

Chain Chomp

> Plenty is putting it nicely.
>
> Nate is the only one who specifically said March. Even then, he did not know the context.

there was a list of articles/sources with who was saying h1 and h2, in this thread.

if memory serves, there were more of them stating h1 than h2. which is definitely plenty. you are correct in saying that it was only Nate stating March.

darthdiablo

+5 Death Stare

- Pronouns

- he/him

How are we feeling about March reveal, May launch? Considering Microsoft and Playstation both seem to have plans for 2H.

qwerp

Moblin

> there was a list of articles/sources with who was saying h1 and h2, in this thread.
>
> if memory serves, there were more of them stating h1 than h2. which is definitely plenty. you are correct in saying that it was only Nate stating March.

Yes, I have seen it and commented on it before. The majority of those saying H1 were just analysts or had poor track records.

> How are we feeling about March reveal, May launch? Considering Microsoft and Playstation both seem to have plans for 2H.

2 months is not long enough between a reveal and launch.

darthdiablo

+5 Death Stare

- Pronouns

- he/him

> Yes, I have seen it and commented on it before. The majority of those saying H1 were just analysts or had poor track records.
>
> 2 months is not long enough between a reveal and launch.

Yeah, that crossed my mind. There was 4.5 months between October 2016 reveal and March 2017 release.

If 2 months isn't enough, I feel like that'd bump it all the way to September 2024 (assuming March is the reveal). A bit crowded in 2H, seems risky to me. I cannot see Nintendo launching anything during summer but Nintendo might surprise me yet again.

mjayer

Bob-omb

> I clicked it now what

You go

Concernt

Optimism is non-negotiable

- Pronouns

- She/Her

> When did eurogamer report on the OLED model?
>
> Again, so little noise for such a close release. (I very much WANT a closer release)

There is a deafening amount of noise. If you can't hear it, consider if you want to, or if you choose to tune it out, out of pessimism.

Blue Monty

Inkling

- Pronouns

- he/him

> OK, Kena: Bridge of Spirits, Concrete Genie aren't bloody death simulators, are they.

Kena is a 3rd party game they made a temp exclusivity deal for, and just this year they closed the studio behind Concrete Genie.

Lancelot

Like Like

> OK, Kena: Bridge of Spirits, Concrete Genie aren't bloody death simulators, are they.
>
> We should get away from the Nintendo = only cutesy, the other 2 = only violent realistic games.

Not a first party game...? Do you know SIE shut down Concrete Genie's studio without hesitation behind the scenes? When talking about big three intentions, you quote their first parties, not their funded indies regardless of artstyle.

> March sounds like the switch 2 presentation or reveal with a release in September

It's quite possible. I think if we are to take Eurogamer's report at face value, Nintendo would like to get it out in H1, which makes me feel like it's a late H1 release. And if they think H1 is possible, then if they have to delay, would the release really slip until the holiday? Therefore, I do like that September release date with my almost convoluted approach to thinking about potential release dates lol.

March doesn't feel like it'll be the release month but we'll probably know by the end of November.

Please read this new, consolidated staff post before posting.

Furthermore, according to this follow-up post, all off-topic chat will be moderated.
