SNES > N64 > GC were the 3 biggest leaps.

I think that's partly because the industry itself was going through a shift. The SNES/Genesis/TG-16, like the consoles designed earlier in the 1980s, were built by pulling off-the-shelf parts and adding a custom chip to do what the manufacturer wanted. The Motorola 68000 in the Genesis was 10 years old by the time the Genesis launched in North America in 1989, and it had been widely adopted by home computers like the Atari ST and Amiga as well as by arcade machines of the time.

Yeah. The earlier generations were basically defined by large leaps in multiple areas, simultaneously.

Node shrinks alone still delivered huge leaps. Moore's Law was in full effect in the 80s: if you did nothing but ride the node shrinks, you could expect performance to double roughly every 18 months.
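As a rough back-of-envelope check of what that rate means over a console generation, here is a small sketch. It assumes a clean doubling every 18 months (the figure quoted above; Moore's original observation was about transistor counts, not performance) and uses a hypothetical five-to-six-year gap between generations. `growth_factor` is an illustrative helper, not anything from the thread.

```python
def growth_factor(years: float, doubling_months: float = 18.0) -> float:
    """Speedup after `years`, ASSUMING performance doubles every `doubling_months`."""
    doublings = (years * 12) / doubling_months  # number of 18-month periods elapsed
    return 2 ** doublings


# Hypothetical gaps between console generations, in years.
for years in (1.5, 3, 5, 6):
    print(f"{years:>4} years -> {growth_factor(years):6.1f}x")
```

Under those assumptions, a five-year gap works out to roughly a 10x speedup from process improvements alone, before any architectural or software gains are layered on top.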

But we were also still coming up with new ideas and architectures at a crazy rate. Money and research were pouring into a new space, real-time graphics, while the personal computer explosion was still pushing CPU architectures forward.

And we were still learning software techniques too. There is a reason that you seemed to have generational leaps

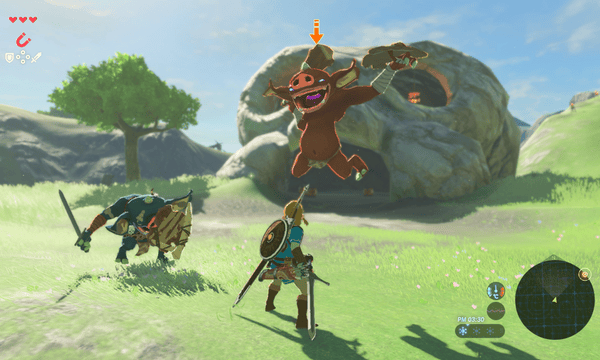

within the generation. The software side of real time graphics was still being learned and developed. You can see that with the guy who has been rebuilding

Super Mario 64 using modern techniques. He's pushing vastly more frames and polygons than the original game, on original hardware, just by applying 25 years of software know-how to the thing.

These things weren't just additive; they were multiplicative. More transistors meant more room for crazy new hardware designs, which fueled new experimentation with software techniques, which in turn informed the next generation of hardware designs.

That's where the meme of "learning the hardware" comes from. There are still little tricks and optimizations buried inside each console, but for the most part, there aren't big leaps in software technique happening over the course of a generation anymore.

Now we live in an era where the film and television industry (see: Pixar) has already worked out the next generation of software techniques, because it can keep throwing more hardware at the problem, and it uses the same tools as the game industry (see: Unreal). Those techniques are just waiting for the hardware to catch up, and the hardware is limited by node shrinks.

If we still had Moore's Law-era shrinks, we'd already have real-time path tracing on consoles. Just as we got rid of 2D acceleration hardware, we'd get rid of current 3D hardware and reimplement the visuals (or even emulate them) on top of a GPU made entirely of ray tracing cores. We know how to do it software-wise, and we even have pretty good architecture designs; there just aren't enough transistors to put it in a device that fits in a computer, much less under your TV.
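For a sense of what "ray tracing cores" spend their transistors on: one of the innermost operations in a ray or path tracer is testing whether a ray hits a triangle. Below is a minimal sketch of the standard Möller–Trumbore ray/triangle test in plain Python, assuming a single ray and a single triangle given as 3-tuples. The helper names (`sub`, `dot`, `cross`, `ray_triangle`) are illustrative, and this is not how any particular GPU implements it in hardware.

```python
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def ray_triangle(origin: Vec3, direction: Vec3,
                 v0: Vec3, v1: Vec3, v2: Vec3,
                 eps: float = 1e-9) -> Optional[float]:
    """Möller–Trumbore test: distance t along the ray to the hit, or None on a miss."""
    e1 = sub(v1, v0)               # triangle edge v0 -> v1
    e2 = sub(v2, v0)               # triangle edge v0 -> v2
    p = cross(direction, e2)
    det = dot(e1, p)
    if abs(det) < eps:             # ray is parallel to the triangle's plane
        return None
    inv_det = 1.0 / det
    s = sub(origin, v0)
    u = dot(s, p) * inv_det        # first barycentric coordinate
    if u < 0.0 or u > 1.0:
        return None
    q = cross(s, e1)
    v = dot(direction, q) * inv_det  # second barycentric coordinate
    if v < 0.0 or u + v > 1.0:
        return None
    t = dot(e2, q) * inv_det       # distance along the ray
    return t if t > eps else None  # ignore hits behind the ray origin
```

A path tracer runs tests like this for every bounce of every sample of every pixel, and hardware RT units pair it with bounding-volume-hierarchy traversal so each ray only has to test a handful of triangles. That sheer volume per frame is why the transistor budget is the bottleneck.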