When you put it like that… Timing-wise, I can see what @BlackTangMaster and @Thraktor meant by a possible change to a newer node.

I can see Nintendo opting to pay for a newer node up front so that a die shrink a few years later is “cheaper” or “easier”. What I can’t see them doing is picking a node, then spending a lot to move the chip to a different node, only to position it as a revision of sorts. That makes less business sense.

In 2019 two models were reported, but in 2021 we had news that one of those supposed models hadn’t been taped out yet. It could be that it wasn’t taped out because Nintendo decided “hmm, let’s put this on a node that keeps our future endeavors in mind and fits our overall plans for the platform, without having to spend unnecessary funds midway”.

For a chip that is supposedly this size, a tape-out taking this long is rather unheard of, I think, unless something went pretty badly.

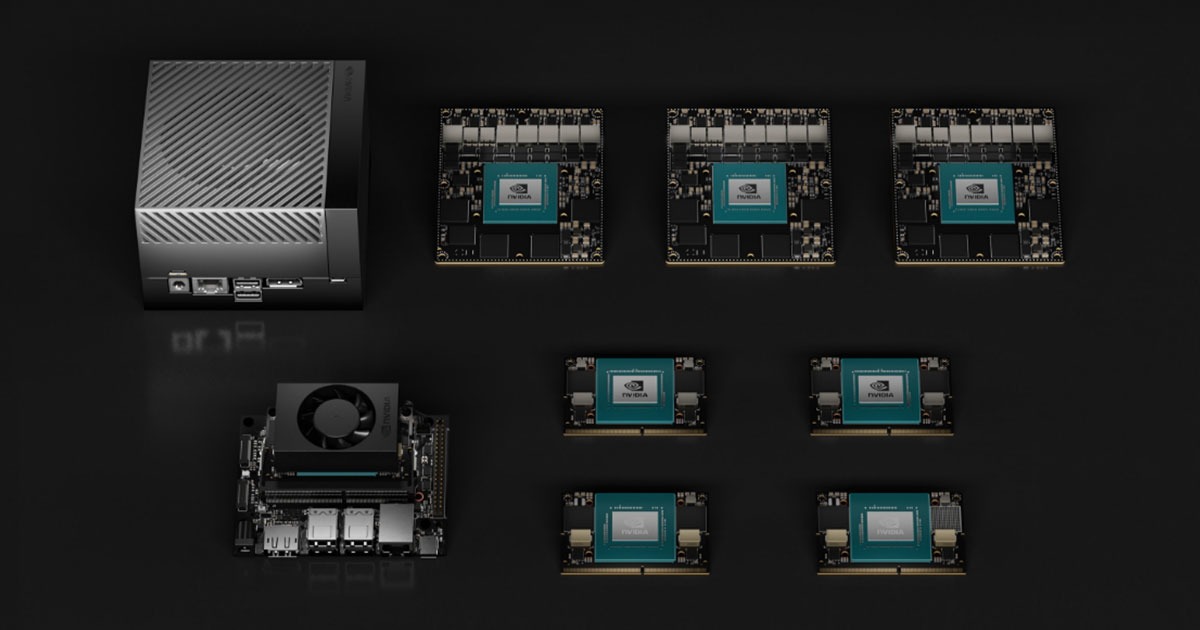

Although they are not SoCs, Nvidia had the 30 series GPUs taped out and on sale in less than a year (a few months, really), at least according to the tweet from Kopite7kimi that also mentioned a Tegra on 8nm DUV.

But, if I’m not mistaken, the Tegra X1 in the Switch was taped out in mid-2016 for a planned 2016 release that was delayed to 2017.

Tegra Orin was announced back in 2019, the same year the reports of a newer, stronger model came out. A new model had been datamined before that, and it turned out to be the OLED model.

To conclude: this is presumably a small chip (suited to the Switch form factor), it is taking this long, and we had already heard rumblings about it way back before the tape-out info came out. So it’s very possible that in this time Nintendo opted to fund a change of node. Nintendo also had 2020, the pandemic year, so a delay could have pushed the chip much later than expected, from say 2021 to 2023-24. Three to four years could be enough for a node change, if they did in fact do that.

This does not necessarily conflict with the reports of developer kits supposedly being out; quite the contrary. They could be early dev kits based on the old hardware (8nm), which would work fine if the new hardware has the same parts and functions but on a better node (e.g. 7nm), so long as the dev kit is clocked appropriately for development.

Then again, these are dev kits. Nintendo could replace them as they please, or pull them if they scrap the successor entirely.

They don’t really need to go that far out to a newer node to leave room for a shrink a few years down the line. 7nm and an eventual 5nm or, hell, “4nm” is more than enough to give them more efficiency if they want it (or a perf boost by clocking it higher).

Edit: I am not saying 8nm is a bad node! It’s fine, but I would not rule out a 7nm or 6nm node really.

As an aside, @brainchild, will you be at GDC this year? And do you need to go to gather more info on how mesh shaders play a part in memory bandwidth utilization? (Or do you already know that? lol) Since it is a more efficient pipeline for geometry culling, I’m curious whether it somehow alleviates the memory bandwidth situation a bit, or if that’s difficult to answer. Unsure if my question is clear. This is off topic from the current discussion, but I’m curious about mesh shaders and memory bandwidth. If we assume the next Switch has this feature at the hardware level but is also constrained on memory bandwidth, this could be one of the things that helps it use less bandwidth overall. But I could be reading it wrong, since it’s a method for geometry culling.

Long-winded!