Cortex-A78 Efficiency Comparison Table by Process // Samsung Foundry

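- Exynos 1480 SPECint06 results were posted, so I compared the IPC and power efficiency of products using the same architecture. People often compare IPC and power-efficiency values purely because the architecture is the same, without correcting for other variables, and then draw whatever conclusion suits them; that needs to be set straight. - Test result source (https://twitter.com/negativeonehero/status/1775866791656603728) Exynos 1480 test: idle power has to be subtracted from set power to get core power, but the Exynos 1480's idle...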

gamma0burst.tistory.com

How does it compare to PS5?

Gosh darn it, I didn't want to bring this up, but since it's come up, I'll have to touch on it, won't I?

First, I do have to complain: why does it have to be SPEC 2006 instead of 2017? (I probably know why.)

Alright, intro: if you follow PC hardware enough, you'll see assorted CPU benchmarks.

Cinebench - free, and I'm under the impression that it's really easy to use as well? Very common to see. It's one specific workload though, so do not use it to gauge 'overall/general purpose' performance. Not all reviewers are necessarily great at getting that across to the audience...

Interestingly enough, Cinebench is regarded as actually having practical value as a test of your cooling. You see, it turns out that Cinebench is a realistically heavy, all core, AVX workload.

Geekbench - free and is designed to be a collection of workloads to represent real world, general purpose usage. Also common to see. Thumbs up for it as long as we remember that it's for 'general purpose computers' and not 'dedicated gaming devices'.

BTW, has anybody else paid attention to the 4700S and 4800S scores? (4700S is the PS5's chip with disabled igpu while the 4800S is the Series X counterpart)

For those who do, did you notice that the 4800S tends to score slightly higher in single core? And if so, have you ever wondered why? My impression is that the main difference lies in a couple of specific tests that turn out to be SIMD (Single Instruction, Multiple Data; i.e. vectors) heavy. And I think that would line up with a specific customization that was done for the Zen 2 core in the PS5. There was a bit of cost optimization done; the FPU is cut down a bit. I think the cuts were mainly to SIMD capability?

(see Chipsandcheese's interpretation of the PS5's FPU)

And one last bit, in the category of 'who else on the internet would make this specific leap in logic?': if Mark Cerny decided that this cut to SIMD capability presumably wouldn't adversely affect the PS5, that leads me to think the odds are lower that the A78's 2x128-bit FP/vector throughput will be an issue for games.

SPEC - this one is regarded as the industry standard. Relative to the above two, you don't see anywhere near as many outlets use this suite. Why? Well, a SPEC2017 license costs $1000 USD. And for a lot of mainstream outlets, given their target demographics, that's a thousand dollars that won't move the needle. Cause hey, sometimes we consumers implicitly encourage the garbage we get, right?

So yea, I can believe that the poster of the SPECint2006 scores didn't want to pay for a 2017 license. Why the lack of floating point though?

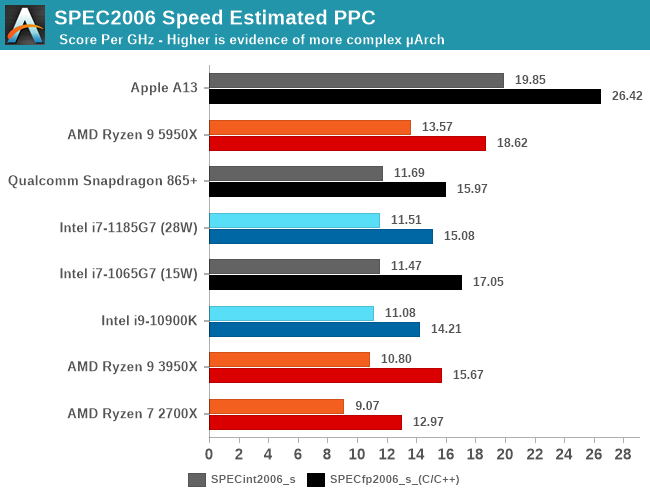

Alright, one last reminder: SPEC2006 is old. It has been superseded by SPEC2017. Now to post the image...

Source:

https://www.anandtech.com/show/1621...e-review-5950x-5900x-5800x-and-5700x-tested/9

Snapdragon 865+ should be using the A77. The A78 should be about +7% over that.
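To make the +7% figure concrete, here's a rough sketch of how you'd scale an A77 result to guess at an A78 one. The 7% uplift is the number quoted above; the input score of 30.0 is a made-up placeholder (not read off the chart), and the function name is just mine for illustration.

```python
def estimate_a78_score(a77_score: float, uplift: float = 0.07) -> float:
    """Scale an A77 result by an assumed generational uplift (default +7%)."""
    return a77_score * (1.0 + uplift)


# Placeholder input: swap in the real Snapdragon 865+ number from the chart.
placeholder_a77_score = 30.0
print(round(estimate_a78_score(placeholder_a77_score), 2))
```

Keep in mind this assumes equal clocks and an equivalent cache/memory setup, which won't hold between real SoCs, so treat the result as a ballpark at best.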

Reminder that when looking at the 3950X, that's chiplet Zen 2. Therefore, a given core would have access to 16 MB of L3 cache. The PS5/Series use monolithic Zen 2; a given core there would have access to 4 MB of L3 cache*. That would very likely have an impact on this benchmark. I say that because I think that there's a noticeable difference between chiplet and monolithic Zen 3 SPEC2017 scores elsewhere (for Zen 3, it'd be 32 MB vs 16 MB).

I should note: I think that Sunny Cove (the i7 1065G7) and Willow Cove (the i7 1185G7) have unusual scores in that chart. If you scroll down far enough, you'll see some SPEC2017 scores, and Sunny Cove/Willow Cove do better in integer there than they did in 2006. And of course, Willow Cove's floating point score for 2006 is bizarrely low. Willow Cove, being a refinement of Sunny Cove, shouldn't differ too much from the latter.

It's fine enough to observe SPEC2006 scores and file them away in your head. I just don't particularly like using them, given the awareness that they've been deprecated for years, yaknow?

Shame that I can't find normalized SPEC2017 scores for ARM cores.

*For those who joined in the time I've been gone: one of the recurring points I'll bring up with regards to the PS5/Series is that I think the 4 MB of L3 cache per CCX is a sore spot.

I like to imply that the PS5/Series' CPU is sort of a 'paper tiger', if you will. ~3.5 GHz of Zen 2 sounds great, right? Now, I'm not saying that it's bad. It's still plenty fine! But generally speaking, when people read or hear 'Zen 2', they're thinking 'desktop Zen 2'. They're thinking of 'chiplet Zen 2 with the 16 MB of L3 cache per CCX paired with DDR memory'. They're not thinking of 'monolithic, therefore L3 cache reduced to 4 MB per CCX with GDDR memory, whose latency can potentially further ding the CPU's performance'. There's a lot of opportunity for wasted clock cycles there, relative to preconceptions.
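For a feel of what 'wasted clock cycles' means, here's a quick back-of-the-envelope sketch: cycles spent waiting is just latency in nanoseconds times clock in GHz. The ~3.5 GHz figure is from above; the two latency values are hypothetical placeholders I picked for illustration, not measurements of DDR or GDDR on any real system.

```python
def latency_to_cycles(latency_ns: float, clock_ghz: float) -> float:
    """Core clock cycles that elapse while waiting latency_ns nanoseconds."""
    return latency_ns * clock_ghz


CLOCK_GHZ = 3.5  # the ~3.5 GHz figure mentioned above

# Hypothetical latencies purely for illustration, NOT measured values.
for label, latency_ns in [("placeholder A", 80.0), ("placeholder B", 140.0)]:
    cycles = latency_to_cycles(latency_ns, CLOCK_GHZ)
    print(f"{label}: {latency_ns} ns is about {cycles:.0f} cycles at {CLOCK_GHZ} GHz")
```

The point is only that every extra nanoseconds of latency on a cache miss costs 3.5 cycles at that clock, and a smaller L3 means more misses that pay that price.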